LLM gateway: overview, benefits, and top platforms

As you build AI applications, you’ll need to use several LLMs intelligently to avoid unnecessary costs, poor outputs, and minimize latency.

Implementing effective LLM routing logic, however, is easier said than done. That’s why there’s an entire market of 3rd-party platforms dedicated to supporting this functionality.

We’ll review the best LLM gateways. But first, let’s align on how they work, why they’re important, and when you need to use one.

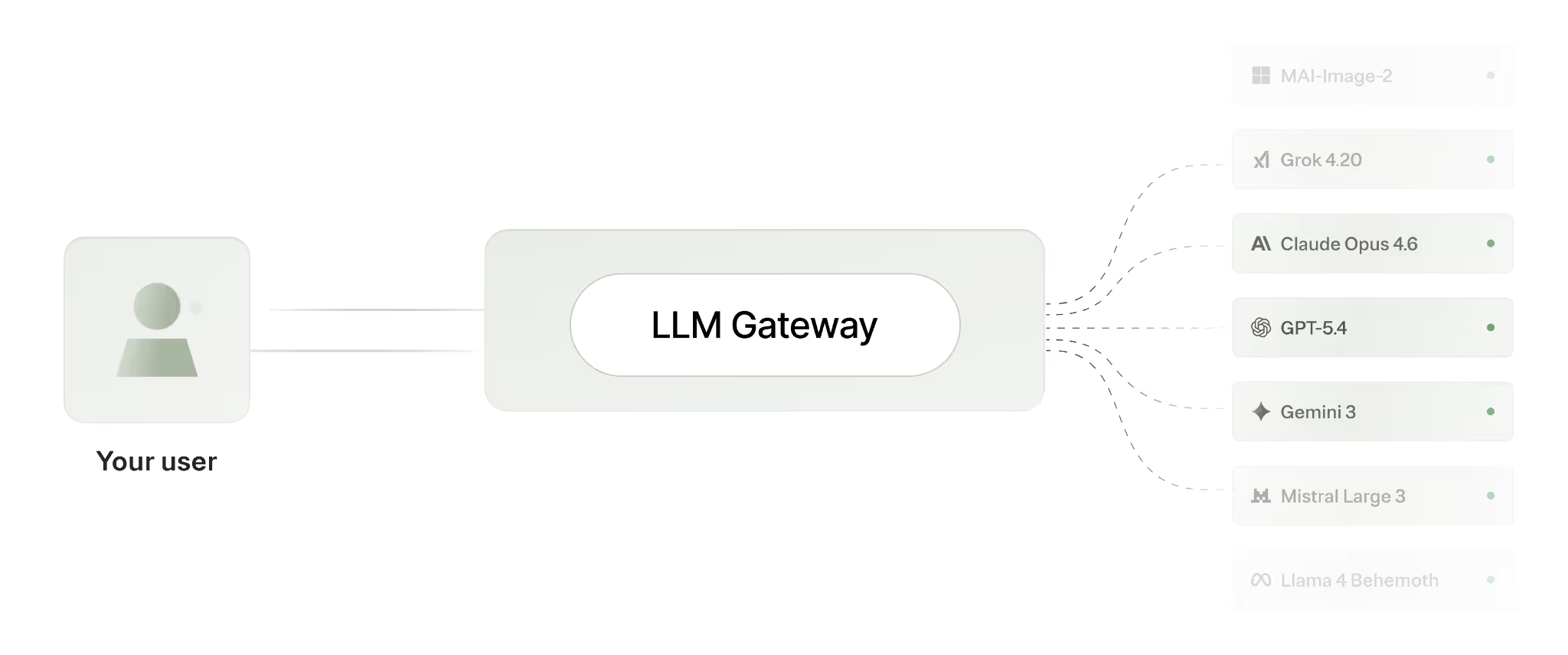

LLM gateway overview

An LLM gateway is a 3rd-party platform that lets you route every user request to the best-fit model. Based on your goals, your routing logic can be driven by cost optimization, speed, or reliability.

While it depends on the provider, LLM gateways typically include the following functionality:

- Routing logic: decide how requests should be routed. This often requires picking your preferred models in order. If one fails, the next preferred option is used by default

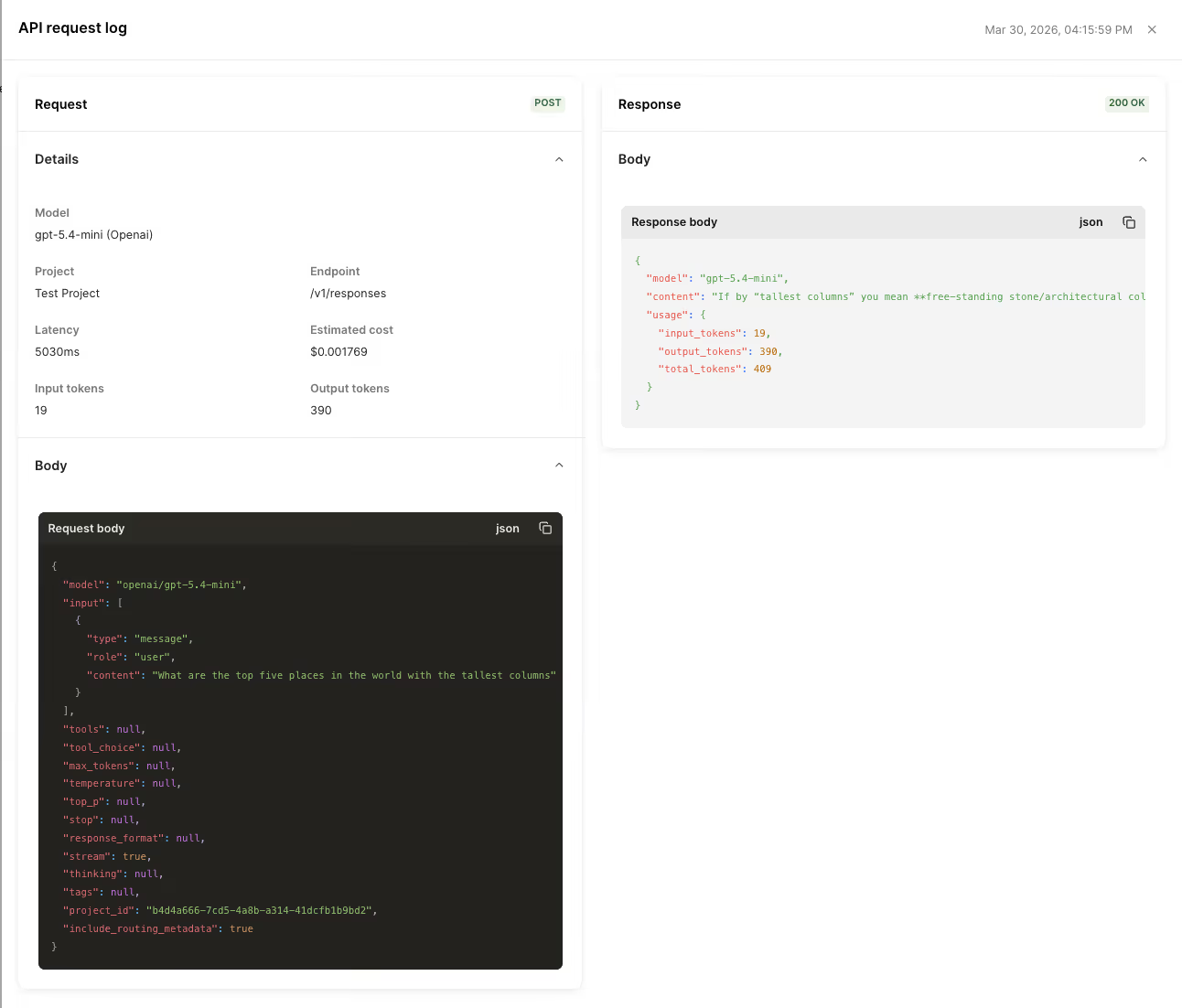

- Usage and spend tracking: track how much you’re spending on each model and model provider. Some gateways even let you create custom projects and tags to help teams drill down further

- Observability: access logs with details like when a request occurred, the model used, the number of tokens consumed, the latency, etc.

- Test simulators: send the same prompt to multiple models and compare their responses, latency, and cost side by side to help you determine the best one to use for a given scenario

Related: A guide to LLM routing

Key benefits of using LLM gateways

There’s a wide range of reasons to use LLM gateways. Here are some of the top ones to consider:

- Avoid building and maintaining provider-specific integrations: every new model means new auth, params, edge cases, and ongoing maintenance. An LLM gateway gives one consistent interface across providers so switching/adding models doesn’t require repeated app changes

- Leverage failover logic: outages/rate limits can degrade or take down AI features unless you’ve built routing and fallback yourself (which is incredibly time and resource intensive). Gateways centralize and enforce routing policies and automatic fallback so traffic can reroute to healthy models over time

- Manage spend policies: spend can be opaque until invoices arrive, and budgets/limits can be hard to enforce in real time. Gateways solve this with budget enforcement, attribution, and controls (e.g., by team) at the infrastructure layer

- Optimize costs without bespoke engineering: reducing token waste and duplicate calls can become a set of one-off app optimizations. A gateway lets you apply cost-saving mechanisms, like context compression and semantic response caching

- Centralize observability: request logs, routing decisions, latency, and cost data are fragmented across providers and services. LLM gateways offer request-level visibility in one place for easy debugging and optimization

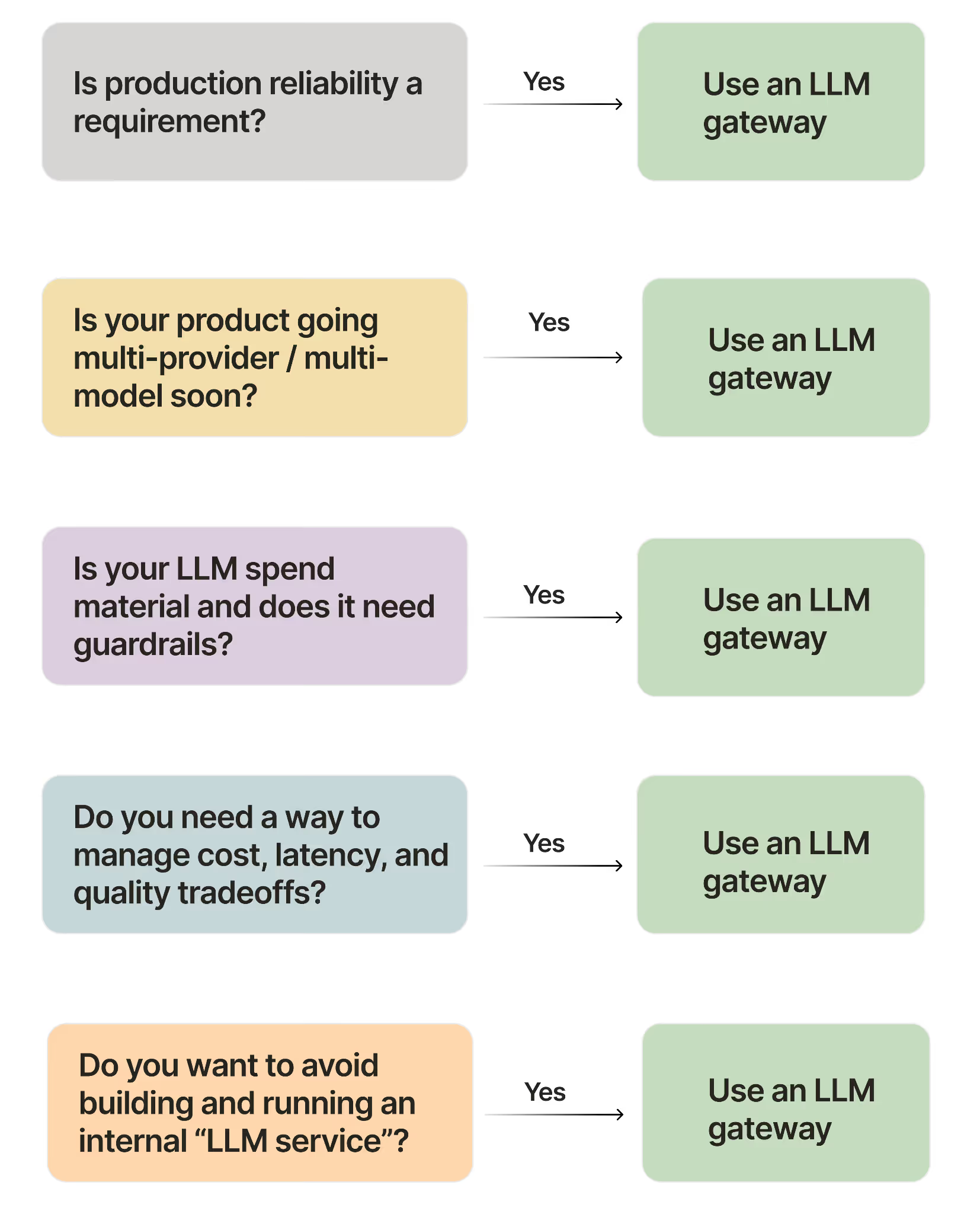

When to use an LLM gateway

Just because you’re building an AI product that uses LLMs doesn’t mean you have to use an LLM gateway. The benefits highlighted above may not be enough to offset the costs of using this type of platform.

Before going into the LLM gateway solutions you should consider (see next section), here’s some general guidance on whether you should use one in the first place.

- Production reliability is a requirement. In other words, you can’t afford to have outages/rate limits take features down

- You’re going multi-provider / multi-model (or expect to soon). You’ll want to avoid lock-in and repeated integration work as models change

- LLM spend is material and needs guardrails. You need real-time visibility and budgets/enforcement (e.g., by project) to prevent surprise costs and protect margins

- You need a consistent way to manage cost, latency, and quality tradeoffs over time. This means routing policies that work per workload/request, not hardcoded model choices that go stale

- You don’t want to build and run an internal “LLM service.” You’re looking to avoid the engineering and ops burden of stitching together provider integrations, routing/fallback, budgeting, logs, and guardrails

Related: How to implement a zero data retention (ZDR) gateway

Best LLM gateway solutions

Here are the LLM gateway providers you should evaluate first.

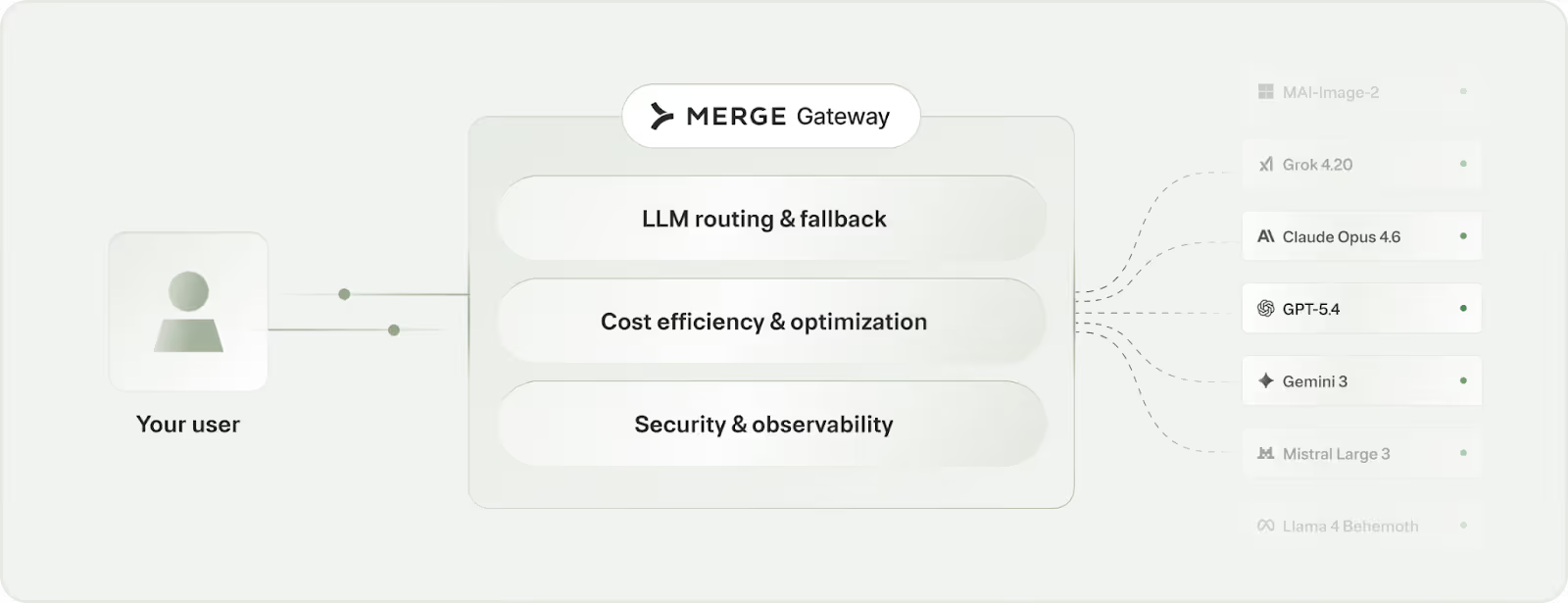

Merge Gateway

Merge Gateway is the control plane for production AI systems. It sits between your application and LLM providers to centralize model access plus how requests are routed, governed, and monitored in production.

Pros

- Unified access to any LLM: Simply integrate once to a single API to access every LLM

- Routing and automatic fallback: This keeps your product up and performing as needed despite provider outages and degradations

- Cost governance and optimization: Access real-time budgets and controls, and reduce spend with mechanisms like context compression and caching

- Request-level observability and centralized governance: Get visibility into requests, routing decisions, and cost outcomes; and use a consistent governance layer over AI usage

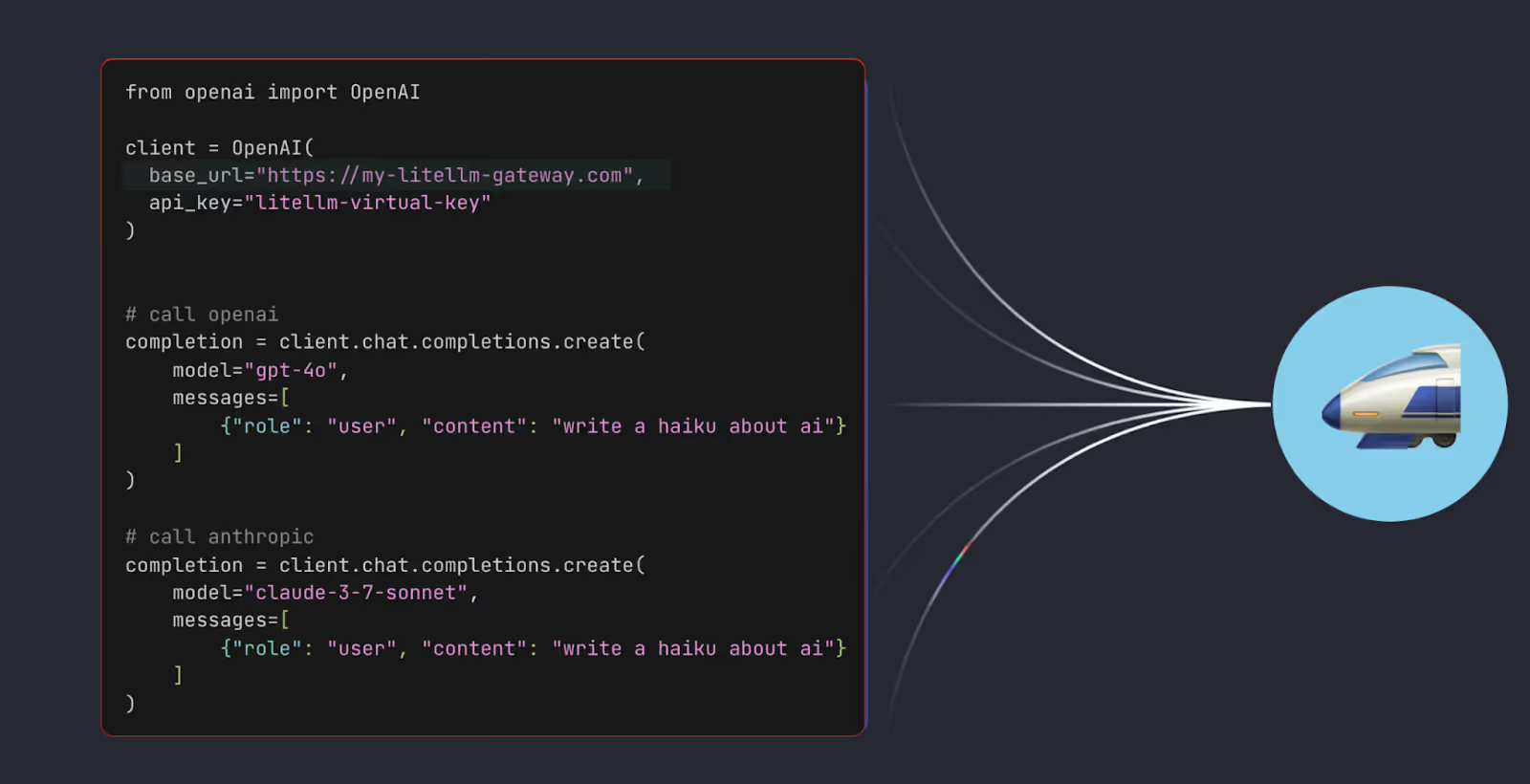

LiteLLM

LiteLLM is a lightweight, OpenAI-compatible proxy/gateway that teams can self-host to route requests across LLM providers.

Teams primarily use it for provider/model mappings and routing. If you use it as your standalone gateway, you’re effectively running your own “tier-0” internal service.

Pros

- Maximum control and customizability: you can deploy it in your own environment and tailor routing policies/logging/integrations to your stack

- Flexible routing logic: you can route to internal/private models as well as external providers

- Developer-friendly gateway building block: you can drop the OpenAI-compatible “lightweight proxy” behind existing OpenAI SDK-based code with minimal changes, then incrementally add multi-provider routing and other gateway logic without rebuilding your app’s LLM integration from scratch

Cons

- Setup and maintenance/DevOps overhead: running your own gateway means deploying, updating, and monitoring it

- Operational risk: any outages, misconfigurations, or scaling issues in your LiteLLM deployment can degrade or take down every AI feature behind it

- Requires in-house expertise/support: when you need new functionality or something breaks, you’re responsible for understanding how it works, debugging issues, and maintaining fixes

Related: The top LiteLLM alternatives

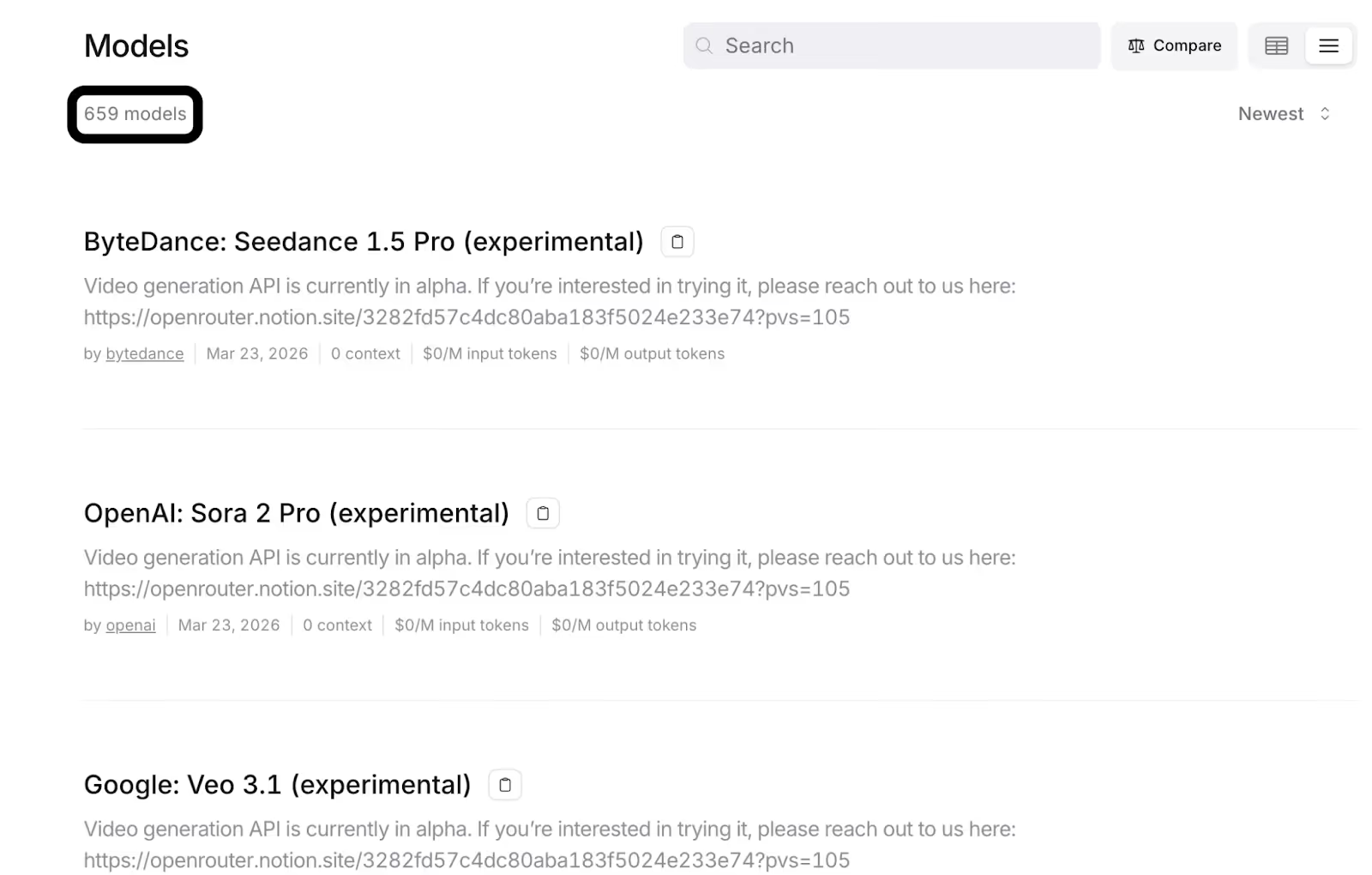

OpenRouter

OpenRouter is a unified, LLM gateway that lets developers access many LLMs through a single endpoint, with built-in routing and failover across providers.

The main difference between OpenRouter and LiteLLM is that OpenRouter is a hosted, managed multi-model gateway you use as a third-party service; LiteLLM is a lightweight, OpenAI-compatible proxy you typically self-host and operate yourself

Pros

- Single API across models and providers: you can easily switch between models and experiment through one integration point

- Built-in routing and fallbacks: this improves uptime and, similar to Merge Gateway, lets you automatically try the next preferred model when one becomes unavailable

- Cost management tools: you can, for example, use prompt caching to reduce token spend/latency when prompts repeat on supported providers

Related: A guide to OpenRouter alternatives

Cons

- Less control than self-hosting: since OpenRouter is a managed platform, you can’t fully tailor the underlying infrastructure (e.g., deployment, deep custom behavior, or internal-only extensions) the way you can with a self-hosted gateway like LiteLLM

- Cost and behavior tradeoffs: Since OpenRouter may route or fail over across providers, the backend (and, as a result, effective price and response consistency) can vary between requests. They may also markup some models for the managed convenience

- Analytics/observability limits: Their built-in reporting is useful, but you’ll need deeper production-grade governance/controls, like real-time budget enforcement

{{this-blog-only-cta}}

.avif)

.png)

%20(6).png)

.png)