Every model.

One API. Total control.

Access all LLMs through a single API, with intelligent routing, cost management, and security built in.

Your control plane for production AI

Gateway connects your app to every LLM provider. Route requests, control costs, enforce security, and monitor every interaction to ship faster and with confidence.

From pilot to production in minutes

Connect

Add one line of code and start calling any major model immediately.

Configure

Set routing policies, budget limits, and security guardrails, or start with defaults.

Monitor

Track every request, routing decision, and cost outcome in real time. Then optimize what's next.

One line of code. Every major model. Always on. Even when providers aren’t.

Replace multiple vendor contracts, API keys, and integrations with one interface and one relationship. Your product stays reliable and efficient even when providers fail.

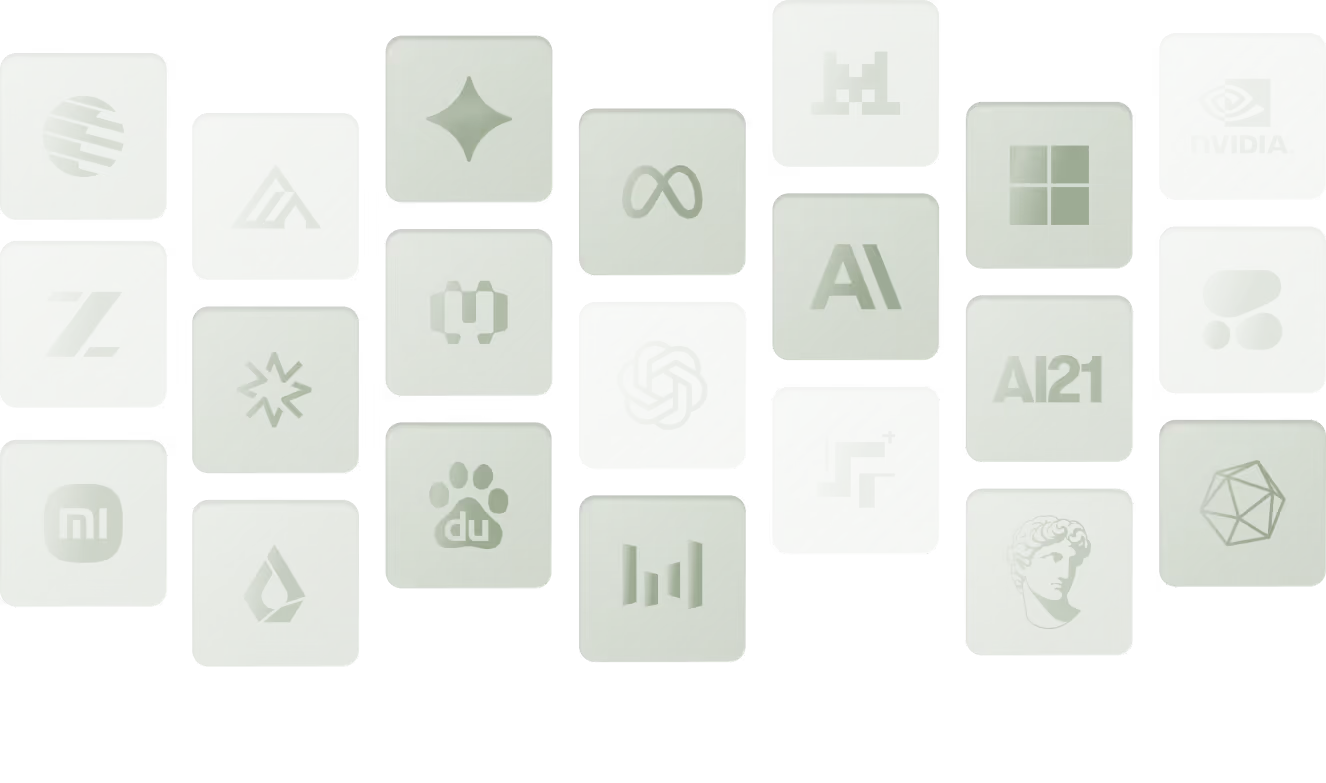

Connect to every LLM through one API

Use top models without managing multiple vendors.

Centralize integrations, credentials, and configuration.

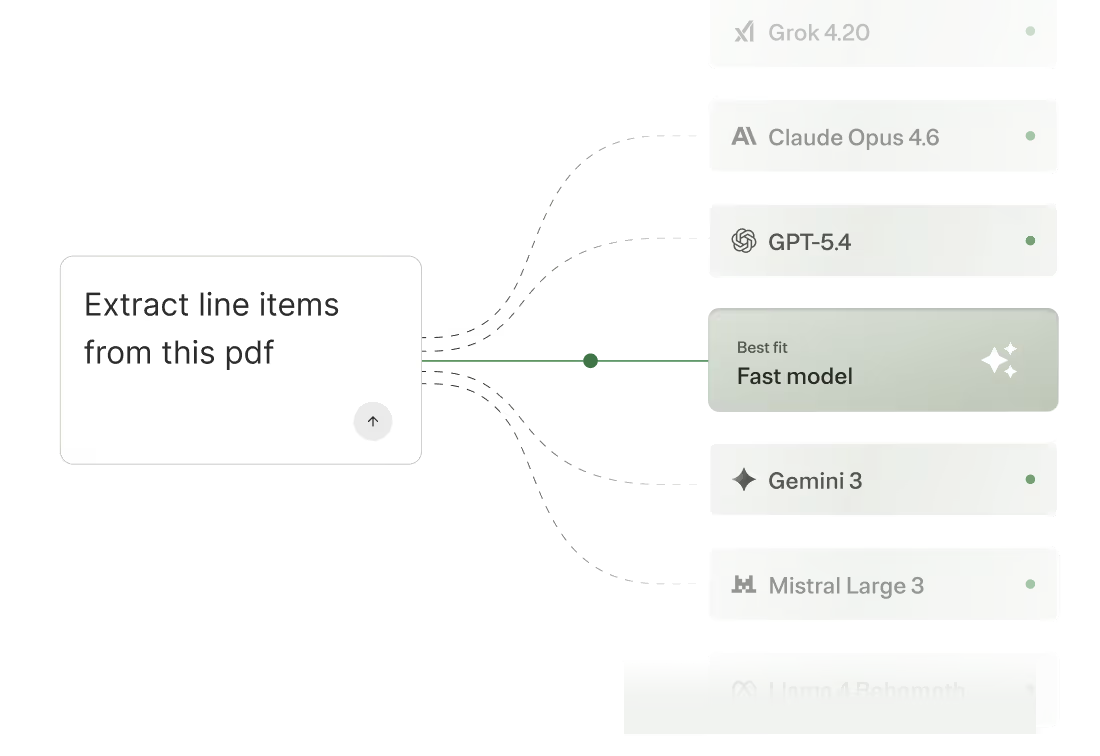

Use the best model for the job

Route requests based on real-time cost, latency, and quality. Manage models in the dashboard without touching code.
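As a rough illustration of routing on cost, latency, and quality, here is a minimal sketch. It is not Gateway's actual routing algorithm: the `ModelStats` fields, the weights, and the model names are all hypothetical, chosen only to show how a weighted trade-off can pick a different model as priorities change.

```python
from dataclasses import dataclass


@dataclass
class ModelStats:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # USD, assumed to be measured elsewhere
    p50_latency_ms: float      # median latency, assumed to be measured elsewhere
    quality: float             # 0..1, higher is better (assumed metric)


def pick_model(candidates, w_cost=1.0, w_latency=1.0, w_quality=1.0):
    """Return the candidate with the best weighted score."""
    # Normalize cost and latency to 0..1 so the weights are comparable.
    max_cost = max(c.cost_per_1k_tokens for c in candidates) or 1.0
    max_latency = max(c.p50_latency_ms for c in candidates) or 1.0

    def score(c):
        cost_term = c.cost_per_1k_tokens / max_cost
        latency_term = c.p50_latency_ms / max_latency
        # Reward quality, penalize cost and latency.
        return w_quality * c.quality - w_cost * cost_term - w_latency * latency_term

    return max(candidates, key=score)


candidates = [
    ModelStats("model-a", cost_per_1k_tokens=0.010, p50_latency_ms=900.0, quality=0.95),
    ModelStats("model-b", cost_per_1k_tokens=0.002, p50_latency_ms=400.0, quality=0.80),
]

balanced = pick_model(candidates)
quality_first = pick_model(candidates, w_cost=0.1, w_latency=0.1, w_quality=10.0)
```

With equal weights the cheaper, faster `model-b` wins; weighting quality heavily flips the choice to `model-a`.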

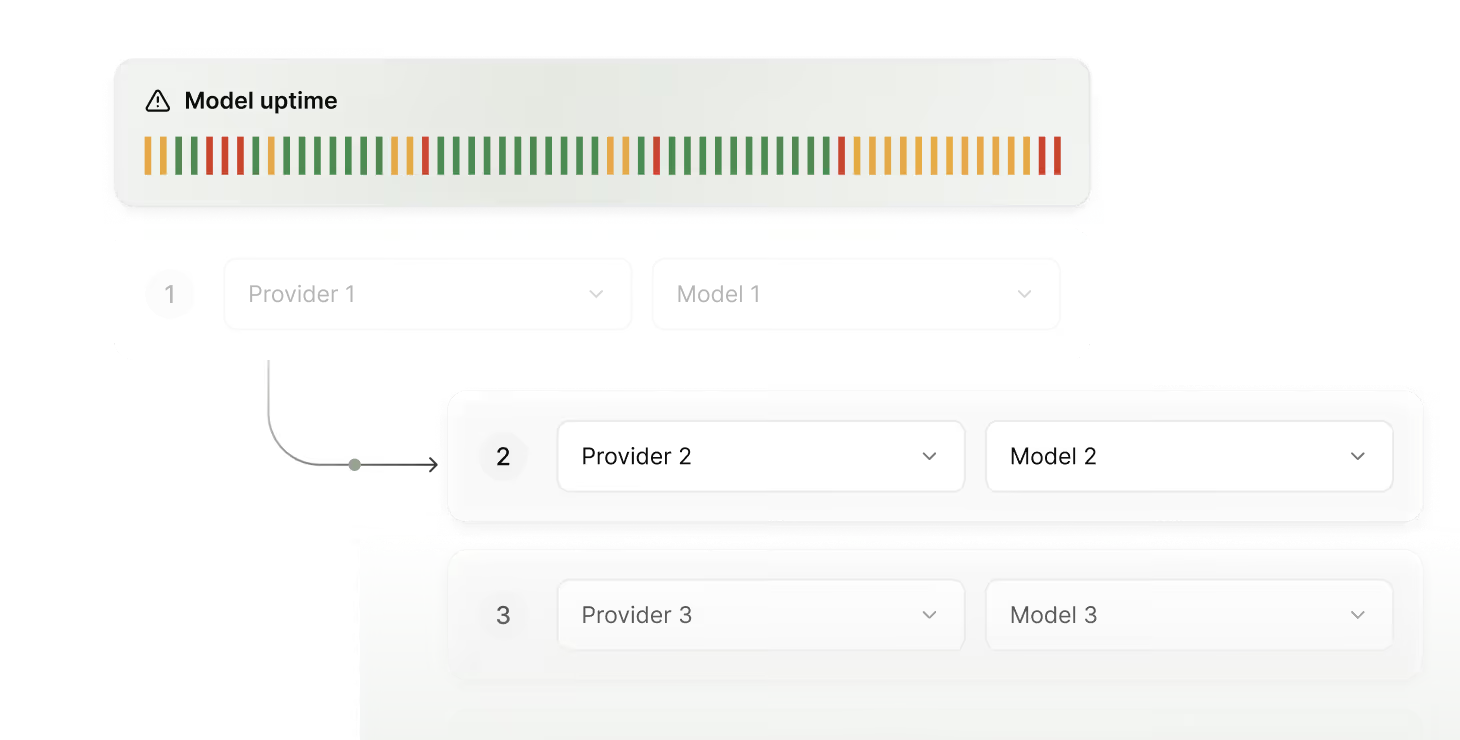

Build resilient AI products

Keep your AI product running without interruptions.

Reroute traffic instantly when providers fail.
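Conceptually, rerouting on failure looks like the sketch below. It only illustrates the idea of ordered failover: `ProviderError`, `call_with_failover`, and the two stand-in provider functions are hypothetical names, not part of any Gateway SDK, and a gateway would do this server-side rather than in your app.

```python
class ProviderError(Exception):
    """Raised by a provider call that fails (hypothetical error type)."""


def call_with_failover(providers, prompt):
    """Try each (name, callable) pair in order; return the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Remember the failure and move on to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


def flaky_primary(prompt):
    # Stand-in for a provider that is currently down.
    raise ProviderError("503 from upstream")


def healthy_backup(prompt):
    # Stand-in for a provider that responds normally.
    return f"echo: {prompt}"


used, reply = call_with_failover(
    [("primary", flaky_primary), ("backup", healthy_backup)],
    "hello",
)
```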

Control token costs as AI usage ramps

Keep AI economically viable as you scale. Compress requests, optimize routing, and enforce budgets to stay efficient while increasing usage.

Eliminate surprise AI bills

Apply spend limits by team, project, or customer tier.

Get alerts before you hit thresholds. Hard stops help slam the brakes; soft stops give you oversight.
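The difference between hard and soft stops can be sketched as follows. This is an assumption-laden illustration, not Gateway's configuration format: the `Budget` class, its field names, and the 80% alert threshold are all made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    limit_usd: float
    alert_at: float = 0.8    # fraction of the limit that triggers an alert (assumed)
    hard_stop: bool = True   # True: block over-limit requests; False: allow but alert
    spent_usd: float = 0.0

    def record(self, cost_usd):
        """Account for one request and return 'ok', 'alert', or 'blocked'."""
        projected = self.spent_usd + cost_usd
        if projected > self.limit_usd and self.hard_stop:
            # Hard stop: reject the request and leave spend unchanged.
            return "blocked"
        self.spent_usd = projected
        if projected >= self.alert_at * self.limit_usd:
            return "alert"
        return "ok"


team = Budget(limit_usd=100.0)                       # hard stop
outcomes = [team.record(50.0), team.record(35.0), team.record(20.0)]

pilot = Budget(limit_usd=100.0, hard_stop=False)     # soft stop
soft_outcome = pilot.record(120.0)
```

Here the hard-stop budget blocks the third request and stays at $85 spent, while the soft-stop budget lets the over-limit request through and reports an alert.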

Stop spending on bloat and unnecessary requests

Lower costs with context compression and response caching. No manual optimization required: it’s handled at the infrastructure layer.
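To make response caching concrete, here is a minimal sketch of an exact-match cache keyed on the request contents. It covers caching only, not context compression, and it is not a description of how Gateway implements either; `ResponseCache` and `fake_provider` are hypothetical names.

```python
import hashlib
import json


class ResponseCache:
    """Exact-match cache: identical model + messages returns the stored response."""

    def __init__(self):
        self._store = {}
        self.hits = 0

    @staticmethod
    def key(model, messages):
        # Serialize deterministically so equal requests hash to the same key.
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model, messages, call):
        k = self.key(model, messages)
        if k in self._store:
            self.hits += 1
            return self._store[k]
        response = call(model, messages)
        self._store[k] = response
        return response


provider_calls = []


def fake_provider(model, messages):
    # Stand-in for a billable provider call.
    provider_calls.append(model)
    return "cached answer"


cache = ResponseCache()
msgs = [{"role": "user", "content": "What is an API gateway?"}]
first = cache.get_or_call("example-model", msgs, fake_provider)
second = cache.get_or_call("example-model", msgs, fake_provider)
```

The second identical request is served from the cache, so the provider is called (and billed) only once.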

Understand where AI spend goes and why

See all spend through a unified billing experience. Get attribution so Finance has answers before they ask questions.

Stay compliant and get visibility into every AI interaction

Set policies once and enforce them everywhere. Every request is automatically logged and searchable from day one. So when the review comes, you're ready.

Observe everything your AI is doing

Access request-level logs for visibility into every routing decision, cost, and policy enforcement so you can continuously optimize how your AI runs.

Your AI roadmap is ambitious. Your infrastructure should match.

Free to start. No sales call required.

FAQ

Gateway charges a small margin on top of underlying provider costs. You get one consolidated invoice instead of managing billing across multiple providers. No platform fees, no per-seat charges.

All major providers, including OpenAI, Anthropic, Google (Gemini), xAI (Grok), Mistral, and Cohere. New providers and models are added regularly and available through the same API.

Gateway provides both deterministic and intelligent routing, so you can optimize for cost, performance, or latency, or pick the best model for each task. When a provider degrades, traffic automatically shifts to maintain reliability.

Most teams make their first call in minutes. If you're already calling LLM APIs, it's a single endpoint swap. Routing, cost controls, and security can be configured from the dashboard after you're connected.

Yes. Set Gateway as your base URL. Existing code works as-is; no new SDK is required. A native SDK is available for deeper integration.
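As a sketch of what a base URL swap might look like, the example below builds (but does not send) the same chat request against two base URLs. It assumes Gateway accepts an OpenAI-style `/chat/completions` request, which this page does not state explicitly. `https://gateway.example.com/v1`, `YOUR_GATEWAY_KEY`, and `example-model` are placeholders; use the real values from your dashboard.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
GATEWAY_BASE_URL = "https://gateway.example.com/v1"  # placeholder, not a real endpoint


def build_chat_request(base_url, api_key, model, prompt):
    """Build an OpenAI-style chat completion request without sending it."""
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Only the base URL and key change; the request shape stays the same.
direct = build_chat_request(OPENAI_BASE_URL, "YOUR_PROVIDER_KEY", "example-model", "Hello")
via_gateway = build_chat_request(GATEWAY_BASE_URL, "YOUR_GATEWAY_KEY", "example-model", "Hello")
```

If you use a provider SDK that exposes a base URL setting, the equivalent change is typically that one setting plus the API key.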

Yes. Add your API keys in the Merge dashboard and we'll route requests to those providers automatically. BYOK usage is billed at $0.05 per million tokens.
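For a sense of scale, the BYOK rate works out as in the short calculation below. It assumes the fee applies to the total token count of a request and is separate from what your provider charges you directly; the page does not spell out either detail, and the 250 million token volume is just an example.

```python
BYOK_FEE_PER_MILLION_TOKENS_USD = 0.05  # rate quoted above


def byok_fee_usd(tokens):
    """Gateway BYOK fee in USD for a given token count (assumes total tokens)."""
    return tokens / 1_000_000 * BYOK_FEE_PER_MILLION_TOKENS_USD


monthly_tokens = 250_000_000  # hypothetical monthly volume
fee = byok_fee_usd(monthly_tokens)
summary = f"{monthly_tokens:,} tokens -> ${fee:.2f} BYOK fee"
```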