3 LiteLLM alternatives to prioritize evaluating in 2026

As you look to build cost-effective and reliable AI products, you’ll likely consider an LLM routing solution like LiteLLM.

Before deciding whether to use it, it’s worth comparing it to its top alternatives: Merge Gateway, OpenRouter, and Portkey. We’ll help you do just that below.

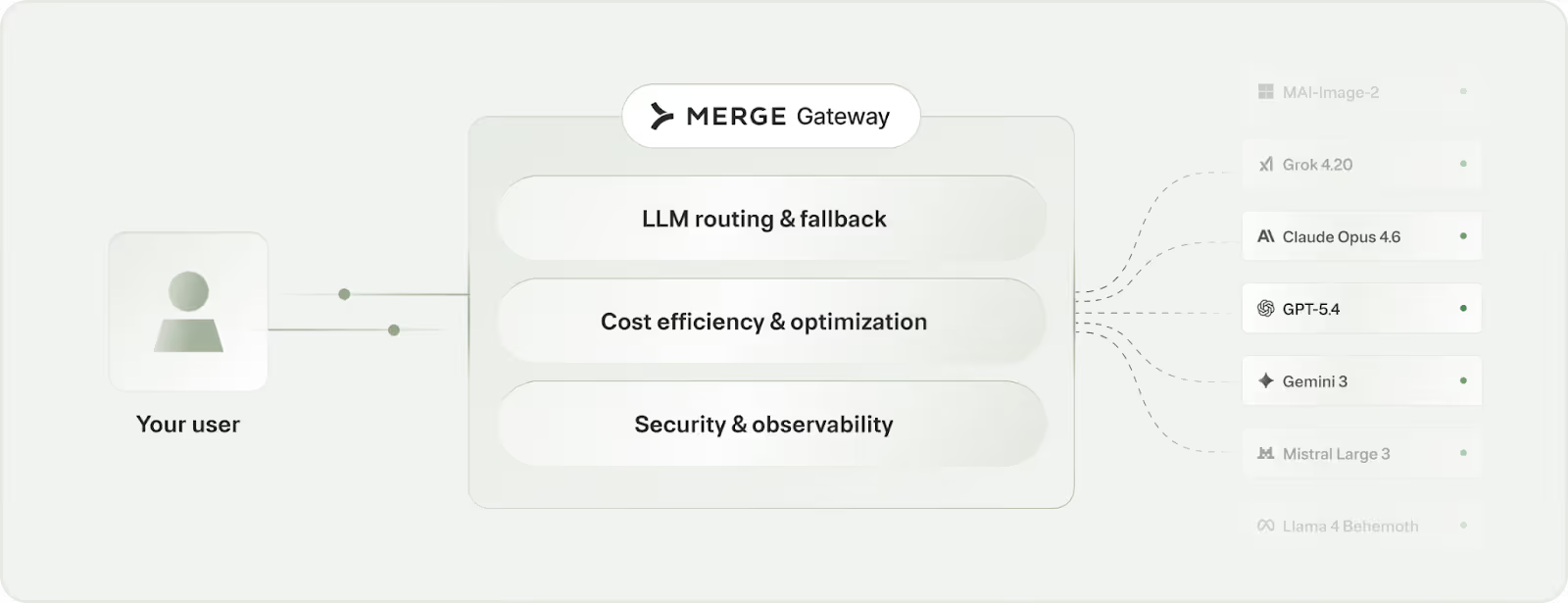

Merge Gateway

Merge Gateway offers a single API and control plane for running AI in production. It sits between your application and LLM providers, giving you one layer to manage model access, reliability, cost, governance, and billing across providers.

Top features

- Unified API across models: Integrate once and call major LLM providers through a consistent interface, without SDK sprawl or provider lock-in

- Routing and automatic fallback: Policy-based and intelligent routing with failover so AI features stay up through provider issues and rate limits

- Cost governance and optimization: Set budget and spend controls by project, team, or customer tier, plus LLM cost optimization features like context compression and semantic caching

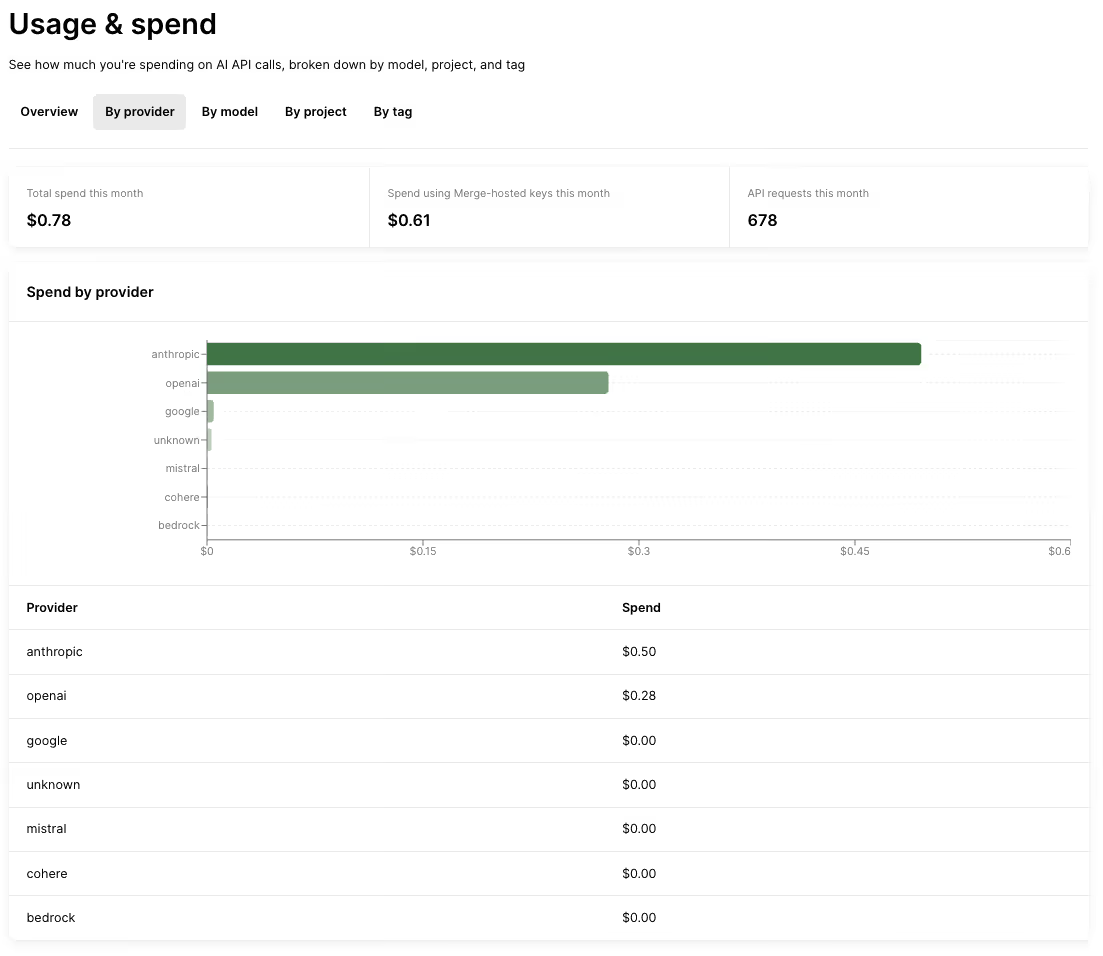

- Holistic and granular cost analysis: Track your spend across LLM providers, projects, custom tags, and more over time

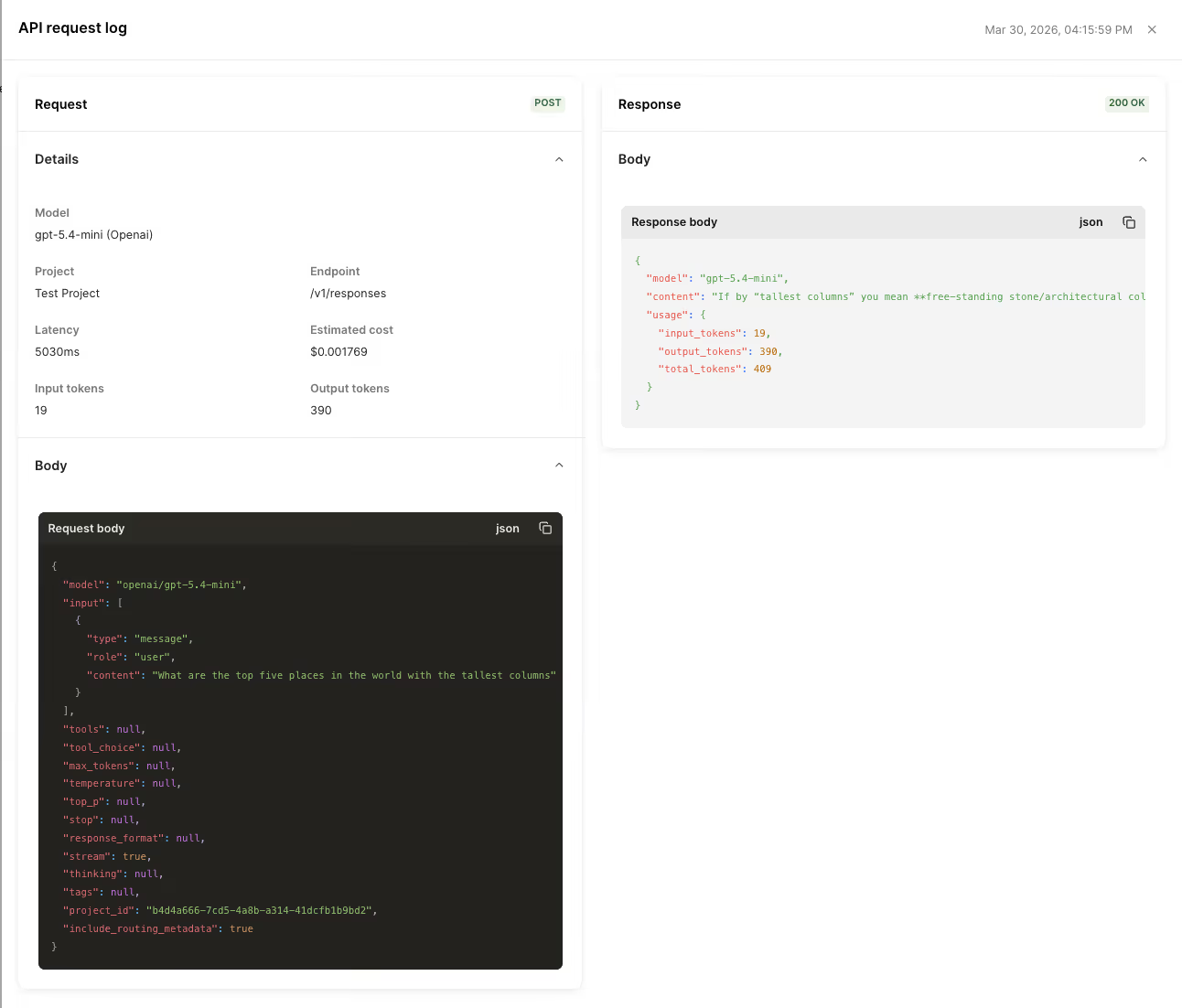

- Observability: Access request-level logs with details like routing decisions, cost, latency, and governance outcomes for debugging and audits
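To make the cost governance and cost analysis features above more concrete, here’s a minimal Python sketch of how spend can be attributed to projects and tags and checked against budgets. It is not Merge Gateway’s API: the `RequestLog` record, the `spend_by` and `over_budget` helpers, and the sample figures are all hypothetical and only illustrate the idea.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class RequestLog:
    """One hypothetical request-level log entry (not a real gateway schema)."""
    provider: str
    project: str
    cost_usd: float
    tags: dict = field(default_factory=dict)


def spend_by(logs, key):
    """Sum cost per value of `key`: a built-in field or a custom tag name."""
    totals = defaultdict(float)
    for log in logs:
        if key in ("provider", "project"):
            value = getattr(log, key)
        else:
            # Anything else is treated as a custom tag.
            value = log.tags.get(key, "untagged")
        totals[value] += log.cost_usd
    return dict(totals)


def over_budget(logs, budgets):
    """Return the projects whose total spend exceeds their budget (in USD)."""
    spend = spend_by(logs, "project")
    return sorted(p for p, limit in budgets.items() if spend.get(p, 0.0) > limit)


# Illustrative data only.
logs = [
    RequestLog("openai", "search", 0.50, {"tier": "free"}),
    RequestLog("anthropic", "search", 0.75, {"tier": "pro"}),
    RequestLog("openai", "support-bot", 0.25, {"tier": "pro"}),
]

print(spend_by(logs, "project"))
print(spend_by(logs, "tier"))
print(over_budget(logs, {"search": 1.00, "support-bot": 1.00}))
```

A managed gateway would compute this from its own request logs; the point of the sketch is that per-request cost plus consistent tags is what makes questions like “what did this customer tier cost us?” answerable.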

When to use Merge Gateway over LiteLLM

- You want a fully managed control plane: With LiteLLM, the gateway often becomes a tier-0 internal service that you need to deploy, scale, monitor, and upgrade, and you also own incident response and any breakages caused by provider changes. Merge Gateway avoids that operational burden by providing the gateway layer as a managed, production-ready system

- You need unified billing and cost attribution across providers. Unlike Merge Gateway, LiteLLM forces you to reconcile separate provider invoices and stitch together usage data, which makes it hard to answer basic questions like “what did this feature or customer tier cost us this week?” at a glance

- Reliability is a production requirement: Because you operate LiteLLM yourself as a tier-0 service, any outage, misconfiguration, or scaling issue in your deployment can take down or degrade every AI feature. Merge Gateway is a fully managed control plane, so you generally do not have to run the gateway infrastructure yourself

- You need audit-ready observability and governance controls. With a self-hosted LiteLLM deployment, your engineers typically need to build and maintain much of the logging, access controls, retention, and policy enforcement around the gateway, which can be complex and time-intensive

OpenRouter

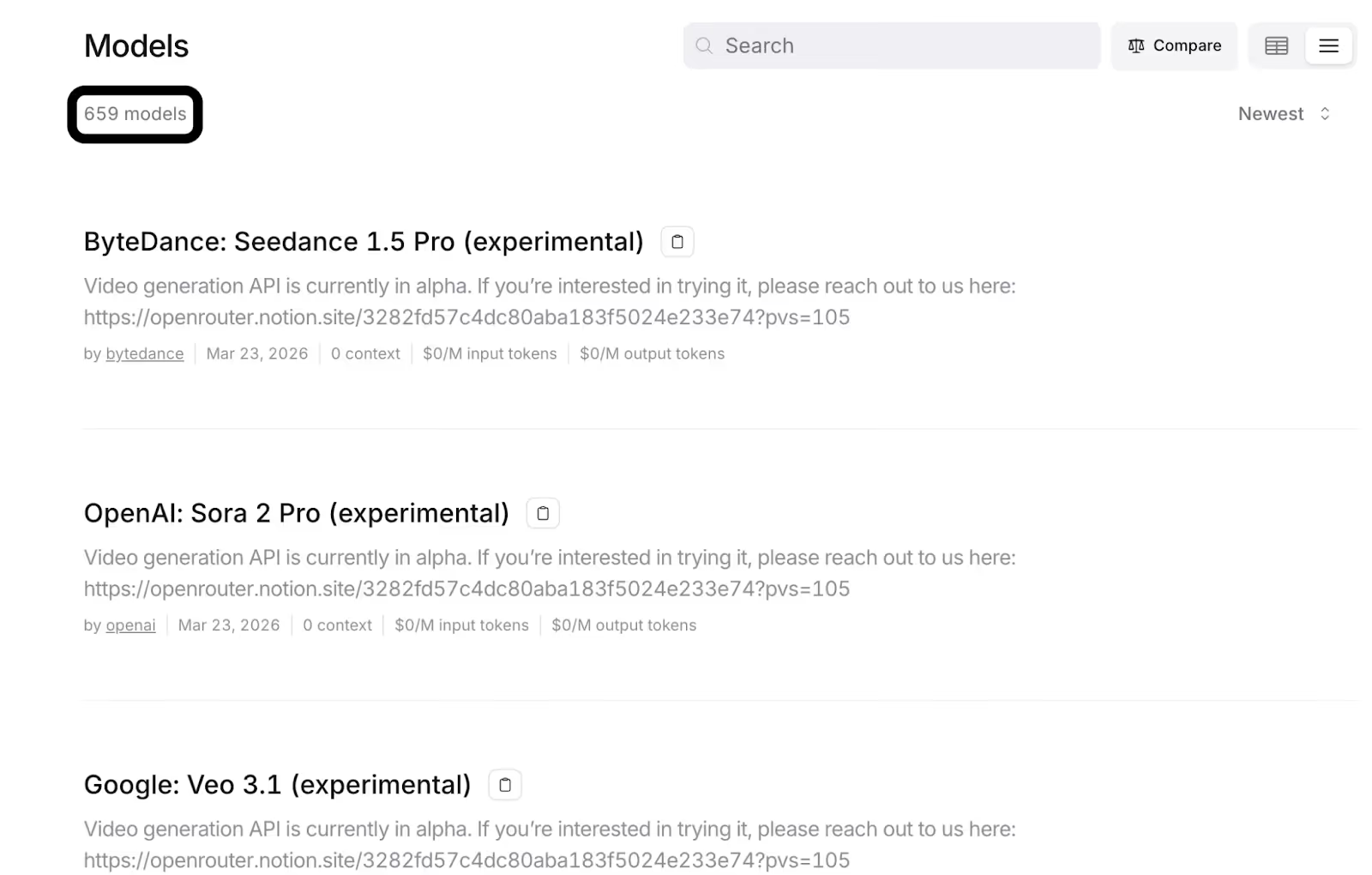

OpenRouter is a unified, OpenAI-compatible API that gives you access to a large catalog of models across providers, with automatic provider selection and failover.

Top features

- Model routing: Access a broad catalog of models (600+) and configure the order in which providers are tried

- Caching support: Cache prompts and responses and set time limits on how long cached entries stay valid

- Unified billing and usage tracking: Use credit-based billing and see how your costs break down by model, time period, API key, project, or request
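The caching idea above (store a response, then treat it as valid only for a limited time) can be sketched in a few lines of Python. This is a generic illustration, not OpenRouter’s implementation or API: `TTLCache`, `cached_call`, and the `call_model` callable are hypothetical names, and a real gateway would handle this for you.

```python
import hashlib
import time


class TTLCache:
    """Hypothetical in-memory cache whose entries expire after `ttl_seconds`."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without waiting
        self._store = {}

    @staticmethod
    def _key(model, prompt):
        # Exact-match key: the same model and prompt map to the same entry.
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def get(self, model, prompt):
        key = self._key(model, prompt)
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, response = entry
        if self.clock() >= expires_at:
            del self._store[key]  # entry is stale, so drop it
            return None
        return response

    def put(self, model, prompt, response):
        self._store[self._key(model, prompt)] = (self.clock() + self.ttl, response)


def cached_call(cache, call_model, model, prompt):
    """Serve from cache when possible; otherwise call the model and store the result."""
    hit = cache.get(model, prompt)
    if hit is not None:
        return hit
    response = call_model(model, prompt)
    cache.put(model, prompt, response)
    return response
```

Repeated identical prompts inside the time limit are served from the cache, which is where the latency and token savings come from; once the limit passes, the next request goes back to the provider.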

When to choose OpenRouter over LiteLLM

- You want OpenRouter to handle provider selection and failover for you. This reduces engineering overhead and helps keep your AI features running when a provider has downtime or errors

- You need unified billing. Unlike LiteLLM, which forces you to pay providers directly, OpenRouter lets you fund a single credit balance for every provider

- You’re looking for broad, out-of-the-box model access via one gateway. LiteLLM generally requires you to bring your own provider keys and configure each provider, while OpenRouter gives you a single endpoint with a large, pre-integrated model catalog you can use immediately

- You want built-in enterprise controls. OpenRouter offers significantly more security, support, and access management features, including SSO, audit logs, and SLAs

Related: The top alternatives to OpenRouter in 2026

Portkey

Portkey is an AI gateway that sits between your app and multiple LLM providers, giving you one integration point to route requests, enforce policies, and observe production traffic while you bring your own provider API keys.

Top features

- Multi-provider routing and load balancing: You can put Portkey in front of multiple LLM backends (e.g., OpenAI, Anthropic, Bedrock, and a private endpoint), and Portkey decides where each request should go based on rules you set

- Reliability controls: Portkey can automatically retry failed requests and switch to a backup provider if needed

- Caching support: Portkey lets you cache model responses so repeated (or similar) prompts can be served without calling the provider again. This reduces latency and token spend, and you can control when caching is enabled and how long entries stay valid
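The reliability controls above follow a common pattern: retry a failed request a few times, then switch to a backup provider. Here’s a minimal Python sketch of that pattern. It does not use Portkey’s SDK or configuration format: `ProviderError`, `call_with_fallback`, and the provider callables are hypothetical stand-ins.

```python
import time


class ProviderError(Exception):
    """Raised by a hypothetical provider call on a retryable failure."""


def call_with_fallback(providers, prompt, max_retries=2, base_delay=0.5, sleep=time.sleep):
    """Try each (name, call) pair in order, retrying with exponential backoff.

    Returns (provider_name, response). Raises ProviderError if every
    provider fails on every attempt.
    """
    errors = []
    for name, call in providers:
        for attempt in range(max_retries + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
                if attempt < max_retries:
                    # Wait 0.5s, 1s, 2s, ... before retrying the same provider.
                    sleep(base_delay * 2 ** attempt)
        # Retries exhausted for this provider: fall through to the next one.
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

You would pass an ordered list such as `[("primary", call_primary), ("backup", call_backup)]`, where each callable wraps one provider. A gateway moves this logic out of your application so every AI feature gets the same retry and fallback behavior.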

Related: A guide to Portkey alternatives

When to choose Portkey over LiteLLM

- You want stronger “ops and governance” primitives out of the box. Compared with LiteLLM, Portkey puts more emphasis on built-in controls like budgets, rate limits, and detailed request logs/traces so you can enforce guardrails and debug issues

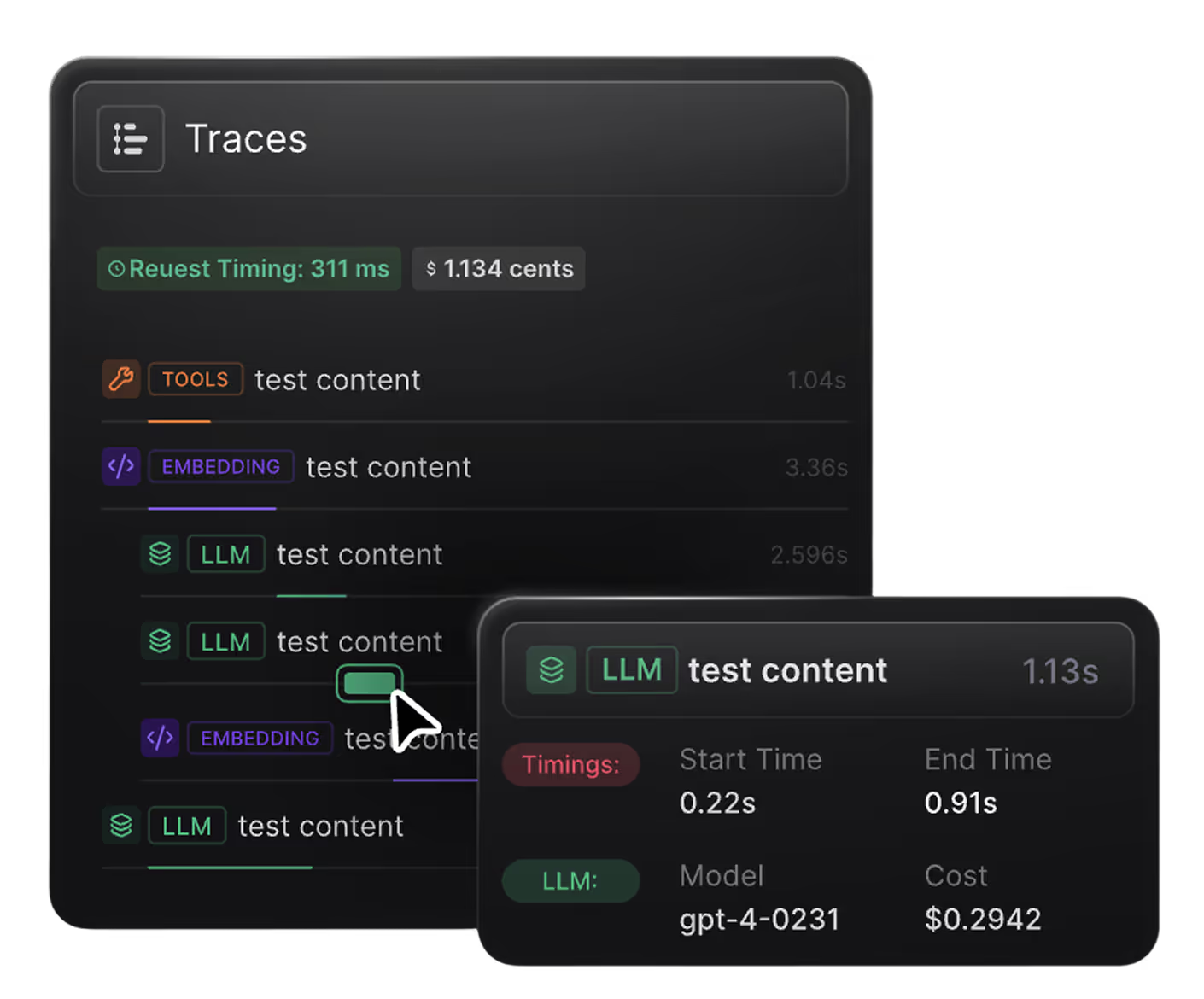

- You need deeper observability and tracing. Portkey offers request-level visibility into what happened in production, like which model/provider handled the call, end-to-end latency, token usage and cost, and the exact error or retry path. This lets you quickly pinpoint whether an issue came from your prompt, the provider, or your own application

- You’re looking for more enterprise controls. This includes features like RBAC, audit logs, and additional deployment options to meet security and compliance requirements as usage scales

- You want a gateway that’s more “production platform” than “proxy.” A production platform like Portkey includes built-in monitoring, guardrails, and reliability features, rather than being a thin forwarding layer your team has to harden and operate
