OpenRouter vs LiteLLM: when to use one over the other

As you build LLM-powered features, you’ll need to auto-route requests to the right model consistently.

Otherwise, you can face inconsistent output quality, higher costs, and slower performance as requests drift to the wrong models or providers. Worse, you risk more downtime without clean failover, and security and compliance exposure if sensitive traffic reaches unapproved endpoints.

Fortunately, you can use LLM optimization solutions like LiteLLM and OpenRouter to manage your spend and performance.

We’ll compare the two directly so you can decide which is the better fit for your product. We’ll also introduce a third option, Merge Gateway, and highlight when it’s the best choice.

But at a high level, here’s how they stack up:

LiteLLM overview

LiteLLM is an OpenAI-compatible SDK and proxy that lets you call many LLM providers through one consistent interface, typically as gateway infrastructure you run (self-hosted or managed).

It’s commonly used to standardize requests and centralize controls while keeping flexibility over providers and deployments.
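To make the "one consistent interface" idea concrete, here is a minimal sketch using LiteLLM's Python SDK. It assumes `litellm` is installed and that provider keys such as `OPENAI_API_KEY` and `ANTHROPIC_API_KEY` are set in the environment. The model names are placeholders, and `build_messages` and `ask` are hypothetical helpers written for this example.

```python
from typing import Dict, List


def build_messages(prompt: str) -> List[Dict[str, str]]:
    """Return a single-turn chat payload in the OpenAI message format."""
    return [{"role": "user", "content": prompt}]


def ask(model: str, prompt: str) -> str:
    """Send one prompt through LiteLLM and return the reply text."""
    # Imported lazily so this module loads even where litellm is not installed.
    from litellm import completion

    response = completion(model=model, messages=build_messages(prompt))
    # LiteLLM returns responses in the OpenAI response shape.
    return response.choices[0].message.content


if __name__ == "__main__":
    # Placeholder model names (assumptions); swapping providers only changes this string.
    for model in ("gpt-4o", "anthropic/claude-3-5-sonnet-20240620"):
        print(model, "->", ask(model, "Summarize our refund policy in one line."))
```

The application code stays the same across providers. Only the model string changes, which is what makes it practical to swap backends later.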

Strengths

- High control: You can tune LLM routing, policies, and deployment topology to match your system requirements

- Works well for “platform teams”: It supports multi-tenancy, usage tracking, budgets, and rate limits for internal consumers

- Broad provider coverage and OpenAI-compatibility: This makes it easy to standardize app code while swapping backends

Weaknesses

- Operational burden: You’re responsible for running and scaling the gateway as a tier-0 dependency, and outages can impact all AI features

- Requires more setup: Bringing providers online often means configuring provider keys, models, routing, and infra details yourself

- Enterprise readiness depends on deployment: Your security posture depends on how you deploy and harden it, and implementing SSO, audit logs, and similar features may require enterprise licensing and additional work

Related: The top alternatives to LiteLLM

OpenRouter overview

OpenRouter is a hosted, OpenAI-compatible API that gives you access to a large cross-provider model catalog, with routing and failover handled for you.

It’s often used when you want broad model access quickly without operating gateway infrastructure yourself.
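Because the API is OpenAI-compatible, a request to OpenRouter looks like a standard chat completions call pointed at a different base URL. The sketch below only builds the request with Python's standard library and does not send it. The API key and model slug are placeholders, and `build_request` is a hypothetical helper for this example.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completions request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key and model slug (assumptions for illustration).
req = build_request("sk-or-placeholder", "openai/gpt-4o", "Hello")
print(req.get_method(), req.full_url)
```

Passing `req` to `urllib.request.urlopen` with a real key would send the call. In practice, most teams would instead point an existing OpenAI client at the same base URL.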

Strengths

- Low ops overhead: You don’t need to host and maintain an LLM gateway layer yourself

- Fastest path to multi-model breadth: You get a large, pre-integrated catalog (600+ models) through one endpoint

- Simplified spend management: It unifies billing through credits and includes built-in usage tracking and reporting

Weaknesses

- Less infrastructure control than self-hosted gateways: This includes the deployment environment, network boundaries, and bespoke governance in the request path

- Platform dependency: You’ll rely on OpenRouter for routing behavior, uptime, rate limits, and quota mechanics

- Harder to meet strict internal requirements: This is especially true if you need maximum customization of the gateway layer, which you’d only get by running the gateway yourself

Related: OpenRouter’s top competitors in 2026

LiteLLM vs OpenRouter

Given all this context on the two solutions, it can be hard to determine when you should use one over the other.

Here’s guidance on making your decision:

- When to use OpenRouter over LiteLLM: You want out-of-the-box model access, routing and failover, and unified billing with minimal engineering and operational overhead

- When to use LiteLLM over OpenRouter: You want to own and customize the gateway layer (including routing and governance) and potentially keep traffic within your own environment to meet stricter control or compliance needs
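Whichever option you pick, failover is a core behavior you're either delegating or owning. As a rough illustration (not how either product implements it), ordered failover can be sketched as trying providers in priority order and moving on when one raises. The provider functions here are simulated stand-ins, and all names are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

# A provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


def call_with_failover(
    prompt: str, providers: Sequence[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, reply)."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def flaky_primary(prompt: str) -> str:
    """Simulated provider that always times out."""
    raise TimeoutError("simulated timeout")


def steady_backup(prompt: str) -> str:
    """Simulated provider that always answers."""
    return f"echo: {prompt}"


used, reply = call_with_failover(
    "ping", [("primary", flaky_primary), ("backup", steady_backup)]
)
print(used, reply)
```

With a hosted router, this loop runs on someone else's infrastructure. With a gateway you operate, you decide the order, the retry rules, and which errors count as failures.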

Introducing Merge Gateway: The best alternative to LiteLLM and OpenRouter

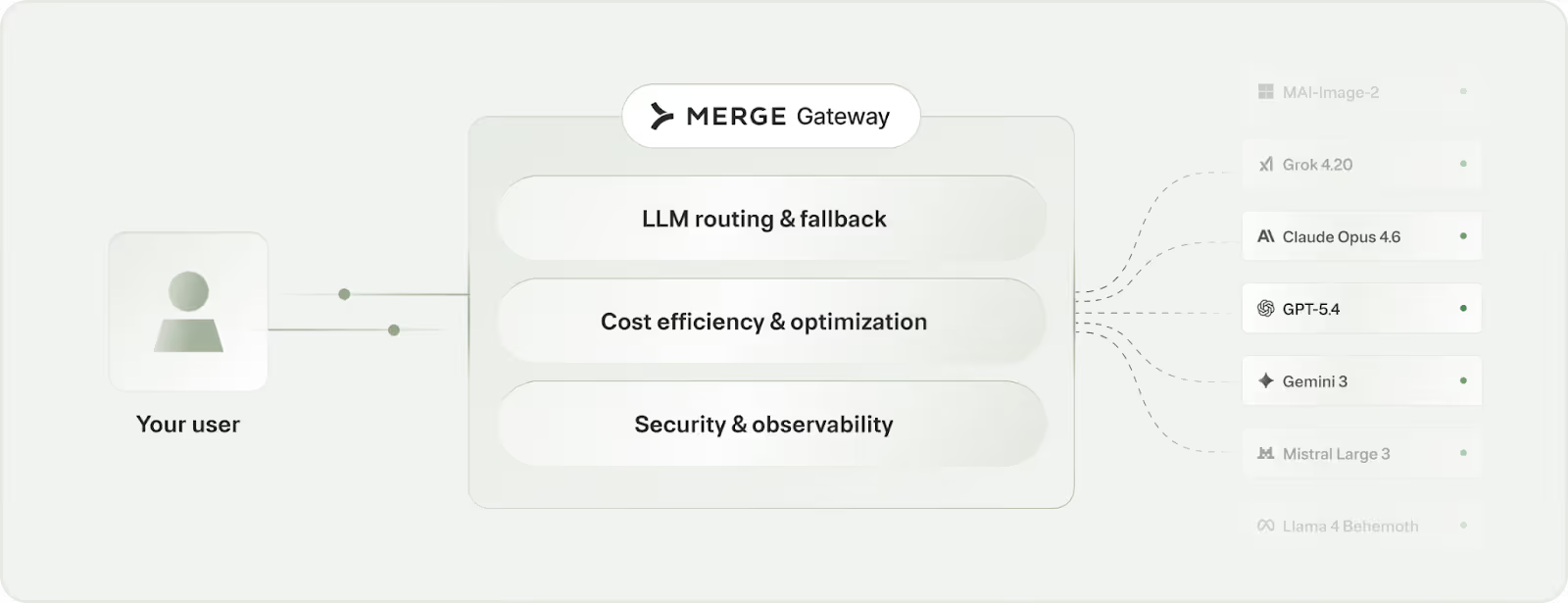

Merge Gateway is a unified API and control plane for building, scaling, and optimizing AI-powered products across LLM providers.

It gives teams one layer for model access, routing, cost governance, security controls, and unified billing so you can ship faster without sacrificing reliability or margins.

Merge Gateway offers the best of LiteLLM and OpenRouter and allows you to avoid their drawbacks by providing:

- Managed control plane: You get the “don’t run it yourself” simplicity you’d want over LiteLLM, while still getting a full control plane (i.e., not just multi-model access) versus a pure hosted router

- Cost governance and optimization in the request path: Access real-time budget enforcement plus optimization levers like context compression and semantic response caching, without requiring application-level changes. This goes beyond what you typically get from either a proxy you operate or a hosted catalog

- Enterprise security and compliance controls in-line: You’ll have built-in DLP-style controls, alerts, and policy-aware compliance checks against company documentation, rather than needing to bolt governance/security on around a gateway

- Unified billing and cost attribution: You’ll get one invoice and a single system of record for spend, with full attribution across providers/models/projects/teams, instead of reconciling provider-by-provider billing (and stitching data together) as usage scales
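To show what budget enforcement and cost attribution in the request path mean in practice, here is a generic sketch. It is not Merge Gateway's implementation. `BudgetGuard`, the team and model names, and the dollar figures are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Tuple


class BudgetGuard:
    """Tracks spend per (team, model) and rejects requests that exceed a team budget."""

    def __init__(self, budgets_usd: Dict[str, float]) -> None:
        self.budgets_usd = budgets_usd
        self.spend_usd: Dict[Tuple[str, str], float] = defaultdict(float)

    def team_spend(self, team: str) -> float:
        """Total spend attributed to one team across all models."""
        return sum(cost for (t, _), cost in self.spend_usd.items() if t == team)

    def authorize(self, team: str, model: str, estimated_cost_usd: float) -> bool:
        """Record the cost and return True only if the team stays within budget."""
        # Teams with no configured budget are denied by default.
        budget = self.budgets_usd.get(team, 0.0)
        if self.team_spend(team) + estimated_cost_usd > budget:
            return False
        self.spend_usd[(team, model)] += estimated_cost_usd
        return True


guard = BudgetGuard({"search": 1.00})
print(guard.authorize("search", "model-a", 0.60))  # fits within the 1.00 budget
print(guard.authorize("search", "model-b", 0.60))  # would push spend past 1.00
print(dict(guard.spend_usd))  # spend attributed per (team, model)
```

Keying spend by team and model is what makes attribution possible later: the same record that blocks an over-budget request also answers who spent what, and where.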

Get started with Merge Gateway today by signing up for a free account!
