How to optimize your LLM costs (5 best practices)

.avif)

As your LLM-powered products gain traction, costs can rise quickly.

While some increase is inevitable, how steep that growth becomes is largely within your control.

We’ll break down practical techniques to optimize your LLM spend. But first, let’s align on what LLM cost optimization actually means and why it matters.

What is LLM cost optimization?

It’s the set of strategies you use to reduce your product’s LLM spend without sacrificing performance. This typically includes routing requests to the most cost-effective models, setting budgets for individual projects, and receiving alerts when costs rise unexpectedly.

Why LLM cost optimization matters

Here are just a few reasons to keep in mind:

- Protects gross margins: LLM costs are often usage-based, so reducing tokens and calls directly improves unit economics

- Enables predictable scaling: Without optimization, costs can rise non-linearly as traffic, context size, and multi-step agent workflows grow

- Improves product reliability: Lower spend usually correlates with fewer requests, smaller prompts, and fewer retries, which reduces latency and timeout risk

- Lets you price competitively: If inference costs are controlled, you can offer stronger pricing or more generous usage limits without losing money

- Prevents “runaway” features: Some features (long context, tool-calling loops, streaming, high-quality models by default, etc.) can silently become cost sinks unless they’re constrained

- Frees budget for quality where it matters: Savings can be reinvested into better models for high-value paths, evals, safety, or more robust infrastructure

- Reduces operational risk: Lower cost variance makes forecasting easier and decreases the chance of surprise bills or emergency throttling

LLM cost optimization strategies

Here are a few core strategies worth putting into practice.

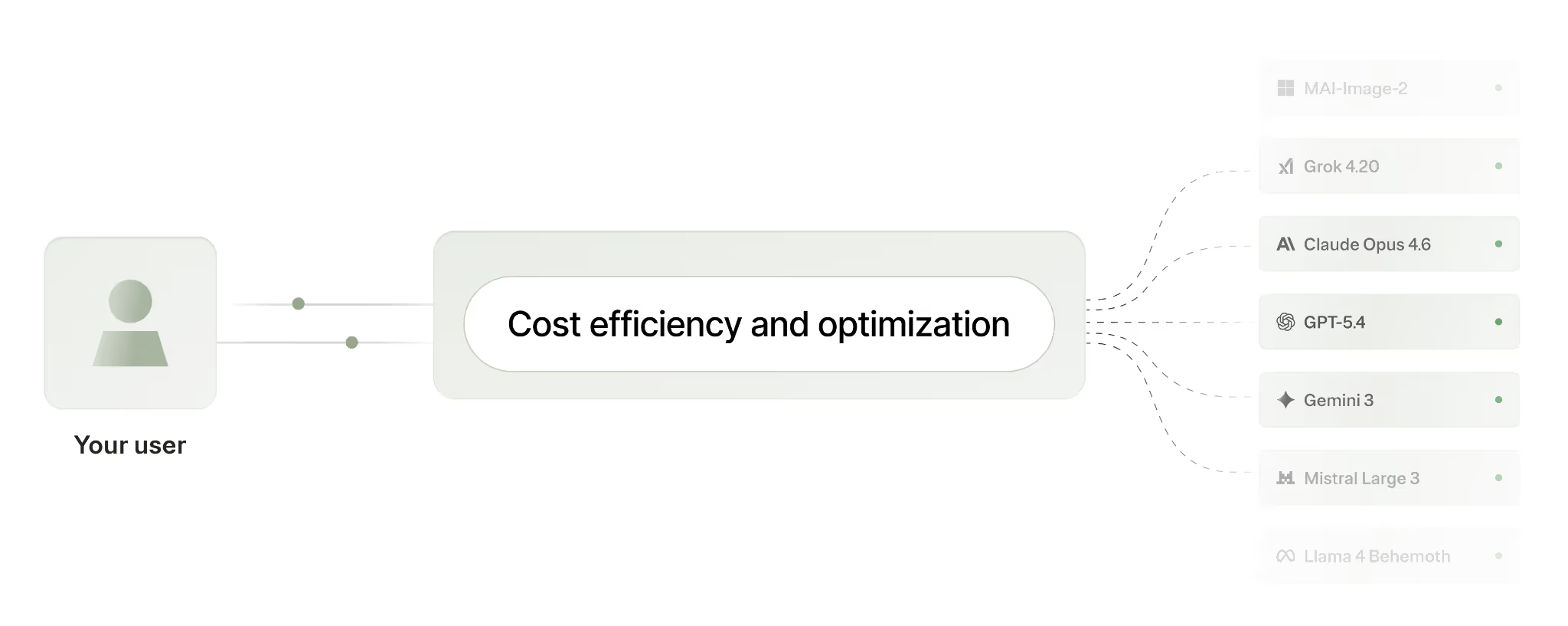

Implement model routing policies that prioritize cost savings

Your product will likely receive requests that vary widely in complexity.

Instead of just letting 1 or 2 relatively expensive models handle every request, you can set policies that define when models handle different requests.

For example, you can classify the scenarios that call for a more basic model (e.g., GPT-5.3 mini), along with scenarios that call for a more advanced model (e.g., GPT-5.3).

You can even use a 3rd-party LLM cost optimization platform, like Merge Gateway, to handle the routing logic on your behalf.

Related: A guide on OpenRouter alternatives

Validate model costs before rolling out routing logic to production

Before implementing the previous best practice, you should also preview the actual costs of using one model over another for a given use case.

To do this, you can simply use a simulator in a 3rd-party solution like Merge Gateway. You’d keep everything consistent between the two models (e.g., the user message and system prompt) and then review the specific costs from each.

Obviously, cost is just one factor. You’ll also need to compare latency and response quality during your side-by-side comparison.

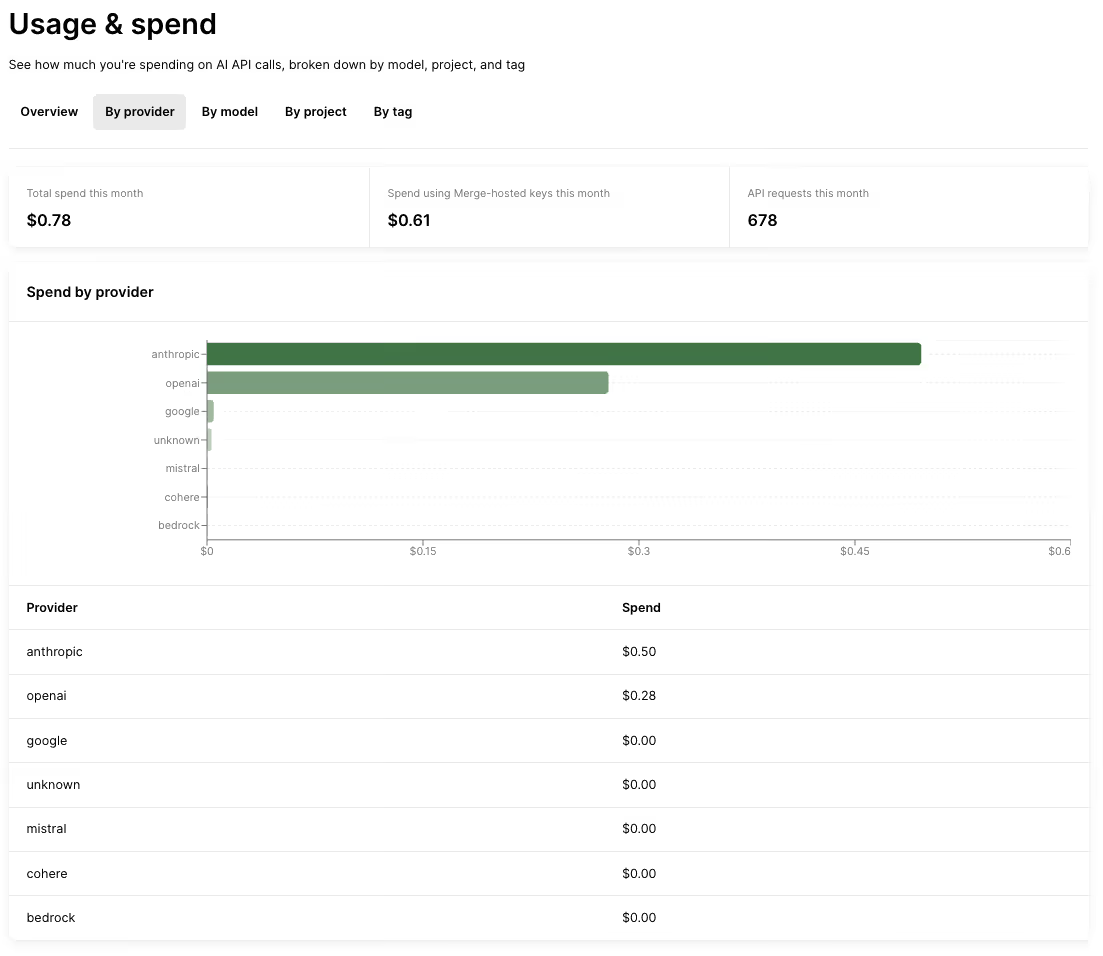

Analyze spend to identify issues and areas of improvement

Once your product starts using LLMs, you’ll need to consistently revisit your costs across several dimensions, such as models, projects (e.g., one of your agents), and customers.

That way, you can right-size the model choice for a given project, set stricter routing rules for expensive models, and catch cost regressions early before they impact margins or force you to throttle usage.

These insights can even help you improve your product’s pricing plans. For example, if you see high demand from a small set of power users, you can set fair usage thresholds (e.g., allotting a certain number of tokens per month) and move heavy usage into an enterprise plan with committed spend.

Use context compression on every request

Context compression cuts down on the text you send to an AI model by removing the irrelevant or repetitive parts.

This reduces the number of tokens you’re billed for on each request, which is especially helpful when prompts include long chat histories, large retrieved context (i.e., RAG), or bulky tool outputs.

It also reduces costs in multi-step scenarios: if a request triggers retries or fallback routing, compressing the context means you pay for fewer tokens on those extra calls too.

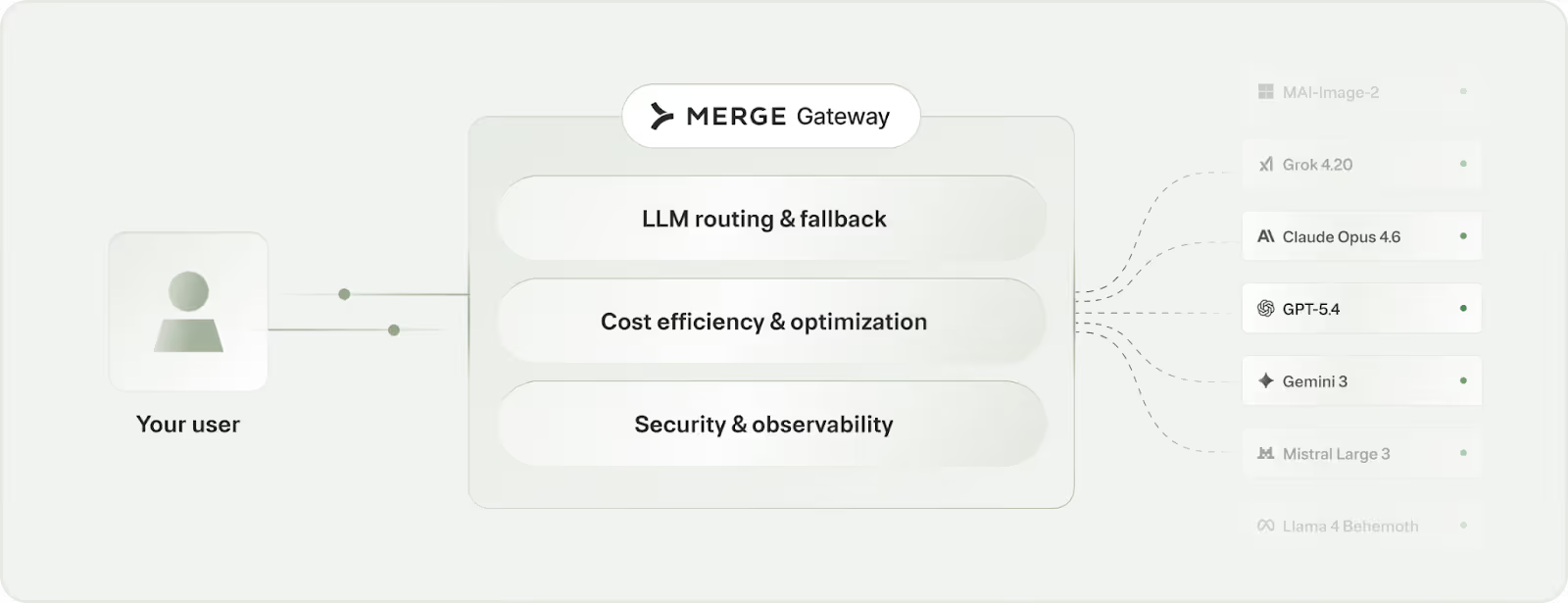

Leverage a 3rd-party platform

All of the strategies we’ve covered don’t need to be built in-house. In fact, they shouldn’t be.

Implementing each can take your engineering team hundreds of hours and countless more time to maintain.

Take LLM routing as an example.

In practice, routing decisions depend on multiple factors: feature criticality, customer tier and SLAs, per-tenant budgets, prompt size, etc. As these rules spread across agents and workflows, what started as a few if/else statements became a centralized system with defined schemas, versioning, and clear ownership.

Routing also needs to handle failures gracefully. That includes provider outages or degradation, low-quality outputs, cost spikes, and fallback paths that preserve the user experience.

And finally, every policy change needs to be rolled out and measured carefully, since routing decisions have direct trade offs with cost, quality, and latency.

Fortunately, your team doesn’t have to worry about building model routing functionality in your product, or any other features that control LLM spend.

You can simply use Merge Gateway, which offers a single API for every LLM and centralizes routing and fallback policies, cost visibility and controls, and governance.

You can start controlling your LLM spend with Merge Gateway by creating a free account!

.avif)

.png)

.png)