3 Portkey alternatives to prioritize in 2026


If you’re looking for an LLM routing solution to help manage your AI product’s costs, you’ll likely consider Portkey.

As you decide whether to use the self-hosted, open-source LLM gateway, you’ll need to compare it to its top alternatives: Merge Gateway, OpenRouter, and LiteLLM.

This article helps you do just that.

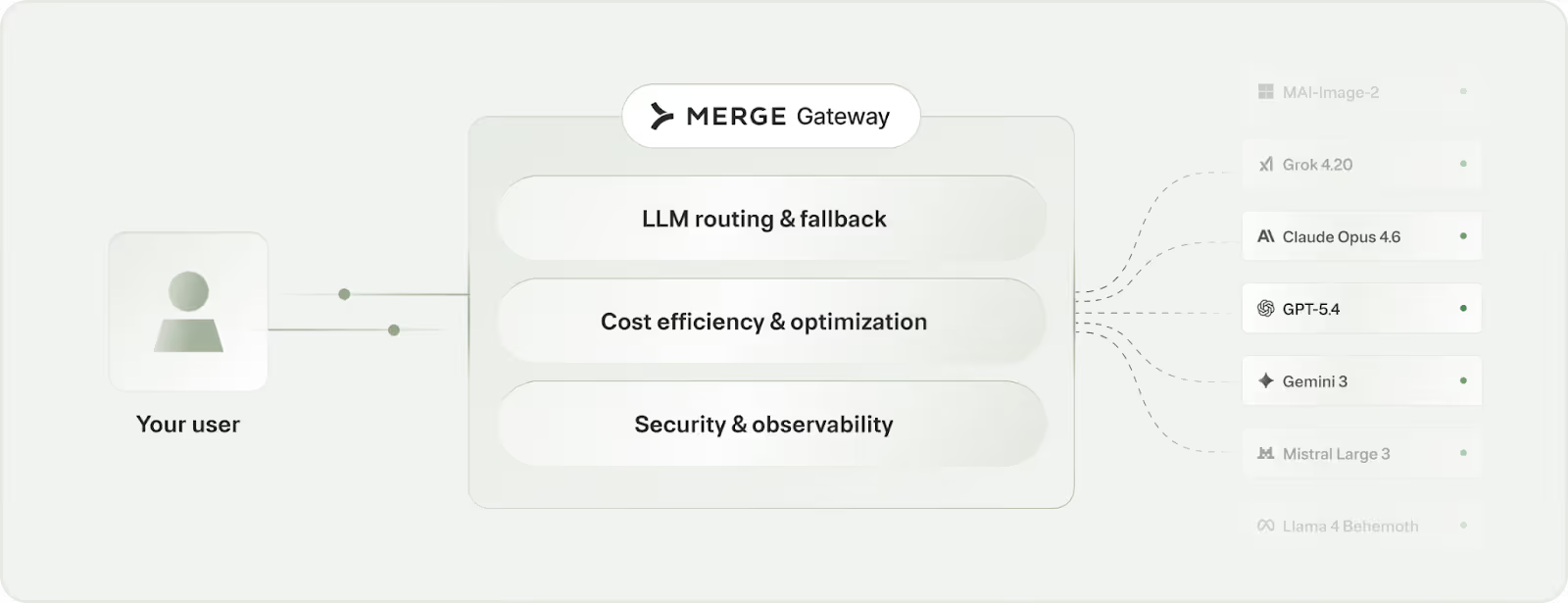

Merge Gateway

Merge Gateway gives teams access to hundreds of LLMs through a single API, with built-in routing, cost governance, security controls, and unified billing.

Top features

- Unified API across models: This allows teams to integrate once and avoid provider lock-in and SDK sprawl

- Routing and automatic fallback: Supports deterministic and policy-based routing (plus adaptive intelligent routing), and automatically falls back to the next-best model or provider when errors or downtime occur

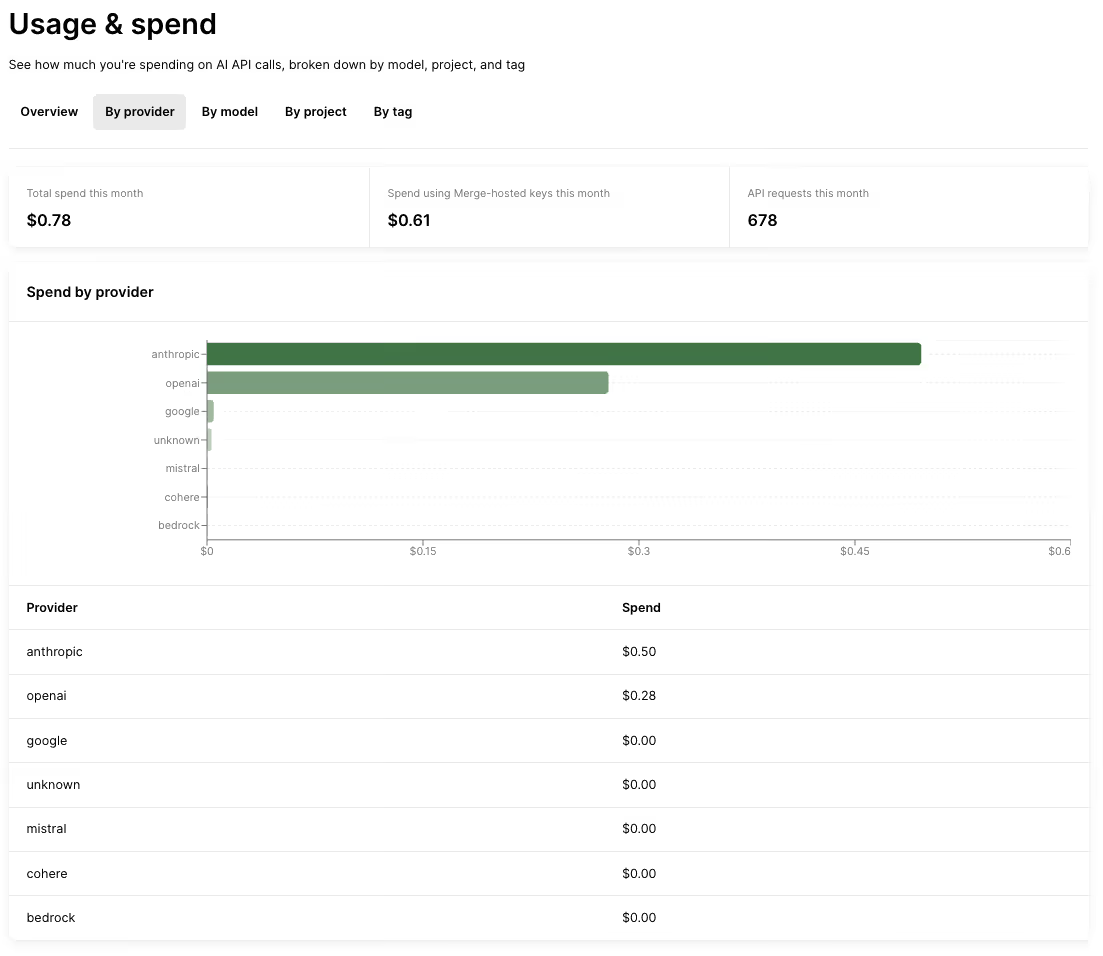

- Cost governance: Enforce real-time budgets and usage controls (e.g., by project) and continuously reduce LLM spend with optimizations like context compression and semantic response caching

- Unified spend visibility: Access full cost attribution across providers, models, projects, and teams
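
To make the fallback idea concrete, here is a minimal sketch of ordered fallback across providers. It is not Merge Gateway's implementation; `call_with_fallback`, `flaky_primary`, and `healthy_secondary` are hypothetical names used only for illustration, and a production gateway would likely distinguish retryable errors (timeouts, rate limits) from permanent ones.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has errored."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order and return (name, response) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # assumption: any exception triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def healthy_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    "hello",
    [("primary", flaky_primary), ("secondary", healthy_secondary)],
)
print(used, reply)
```

The order of the `providers` list is the routing policy here: a deterministic setup fixes that order up front, while a policy-based or adaptive router would compute it per request.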

When to choose Merge Gateway over Portkey

- You want unified billing. Portkey still leaves you with fragmented provider accounts and invoices, while Merge Gateway consolidates spend into a single billing relationship so you can manage multi-provider usage in one place

- You need stronger cost governance. Merge Gateway is positioned to enforce budgets and optimize spend (e.g., a spend limit for a project); Portkey offers less functionality around cost enforcement and margin protection

- You’re looking for a solution with a comprehensive security posture. Merge Gateway adds security guardrails (e.g., DLP-style controls) alongside routing and governance, while Portkey is focused on the routing and observability layer


OpenRouter

OpenRouter is a unified LLM API that gives developers one endpoint to access many models and providers, with built-in routing and fallback behavior. It also offers unified billing via credits, so teams can use multiple providers without managing separate provider invoices.

Top features

- Unified multi-model API: Integrate once and then switch between models and providers by changing a single parameter, instead of maintaining separate provider-specific SDKs and request formats

- Automated routing and fallbacks: Automatically fails over to the next-best provider if the current one errors or is down, and its fallback behavior is configurable so teams can disable it or constrain it to specific providers

- Caching support: Supports prompt and response caching to cut costs and latency on repeat calls, and it’ll try to reuse cached content by routing to the same provider when possible

- Unified billing via OpenRouter credits: Deposit credits with OpenRouter and they handle payments to the underlying model providers on your behalf. This lets you avoid juggling separate provider billing
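
As a rough illustration of switching models by changing a single parameter, the sketch below builds two OpenAI-style chat payloads that differ only in the `model` field. The helper `build_chat_request` and the model identifiers are assumptions made up for this example; nothing is sent over the network, and real identifiers should come from the provider's catalog.

```python
import json


def build_chat_request(model: str, user_message: str) -> dict:
    """Build an OpenAI-style chat completion payload; only `model` varies per provider."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }


# Illustrative model identifiers (assumptions, not checked against any catalog).
request_a = build_chat_request("provider-a/model-x", "Summarize this ticket.")
request_b = build_chat_request("provider-b/model-y", "Summarize this ticket.")

# Collect the top-level fields that differ between the two requests.
changed = {key for key in request_a if request_a[key] != request_b[key]}

print(json.dumps(request_a, indent=2))
print(changed)
```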

When to choose OpenRouter over Portkey

- You want unified billing. OpenRouter unifies billing, while Portkey is positioned as a BYO-keys gateway where you still pay providers directly (so it centralizes usage, but not provider billing)

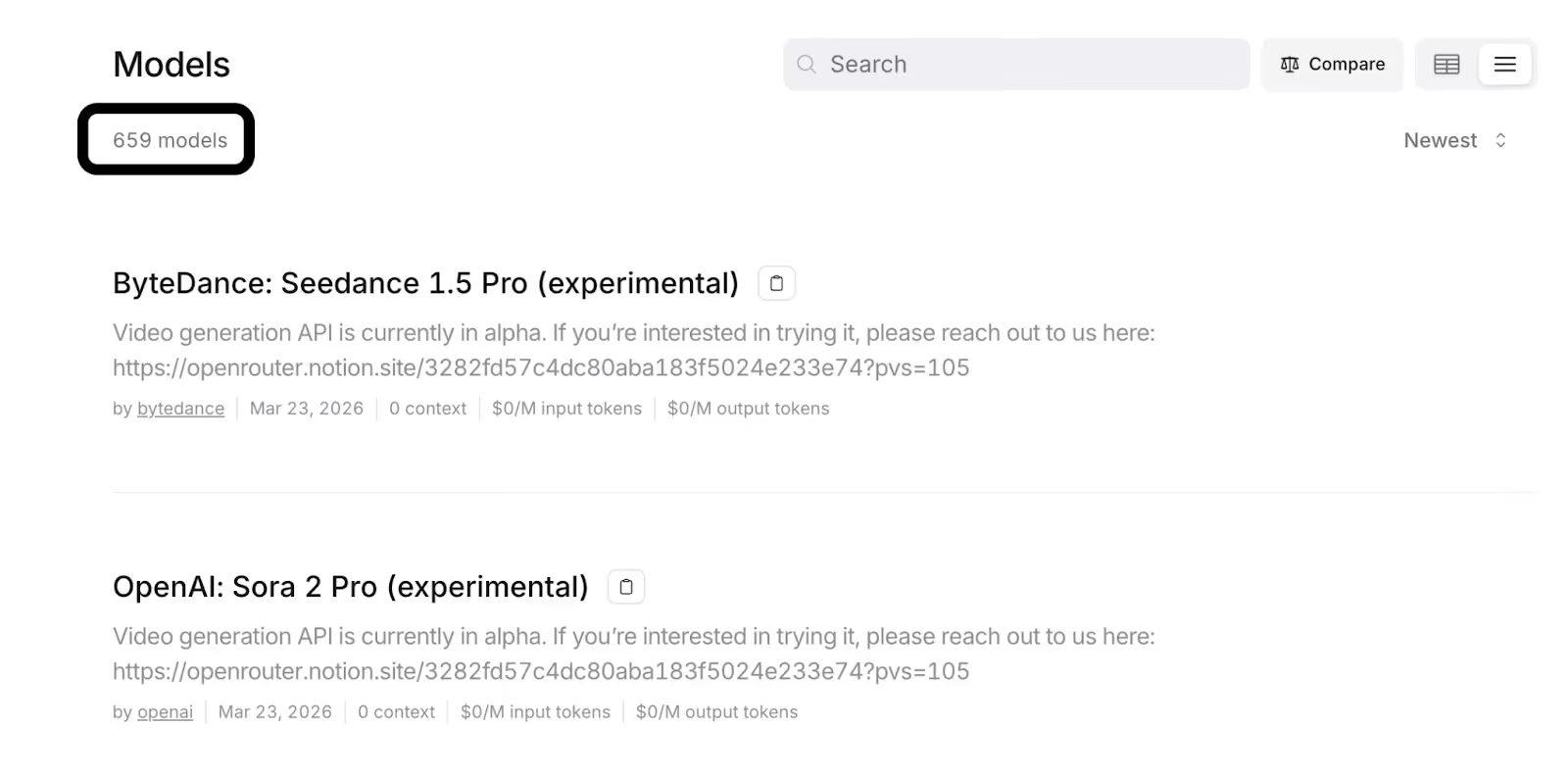

- You need a broad model catalog via a single managed endpoint. OpenRouter offers a large cross-provider catalog (600+ models across 60+ providers), while Portkey is positioned as a BYO-keys gateway where expanding coverage can mean more provider-by-provider setup

- You want credit-based quota controls. OpenRouter lets orgs set spend limits using a credit-based system (e.g., per-API-key budgets tied to an OpenRouter credit balance), while Portkey is positioned as a BYO-keys gateway that can enforce budgets but lacks a unified, credit-based quota system for underlying model spend

Related: OpenRouter’s top competitors in 2026

LiteLLM

LiteLLM is positioned as an OpenAI-compatible interface you can use to call many LLM providers through a consistent API, including support for local deployments.

It’s typically used as infrastructure you run (or otherwise operate) to standardize requests and centralize controls across providers.

Top features

- Unified API: Access a single OpenAI-format interface to call 100+ LLMs (including OpenAI, Azure, Anthropic, etc.)

- Retries and fallback logic: Built-in retry and fallback behavior across multiple deployments for resilience (although configuration is required here)

- Extensive caching options: This includes exact-match response caching and semantic caching, which are configurable per project

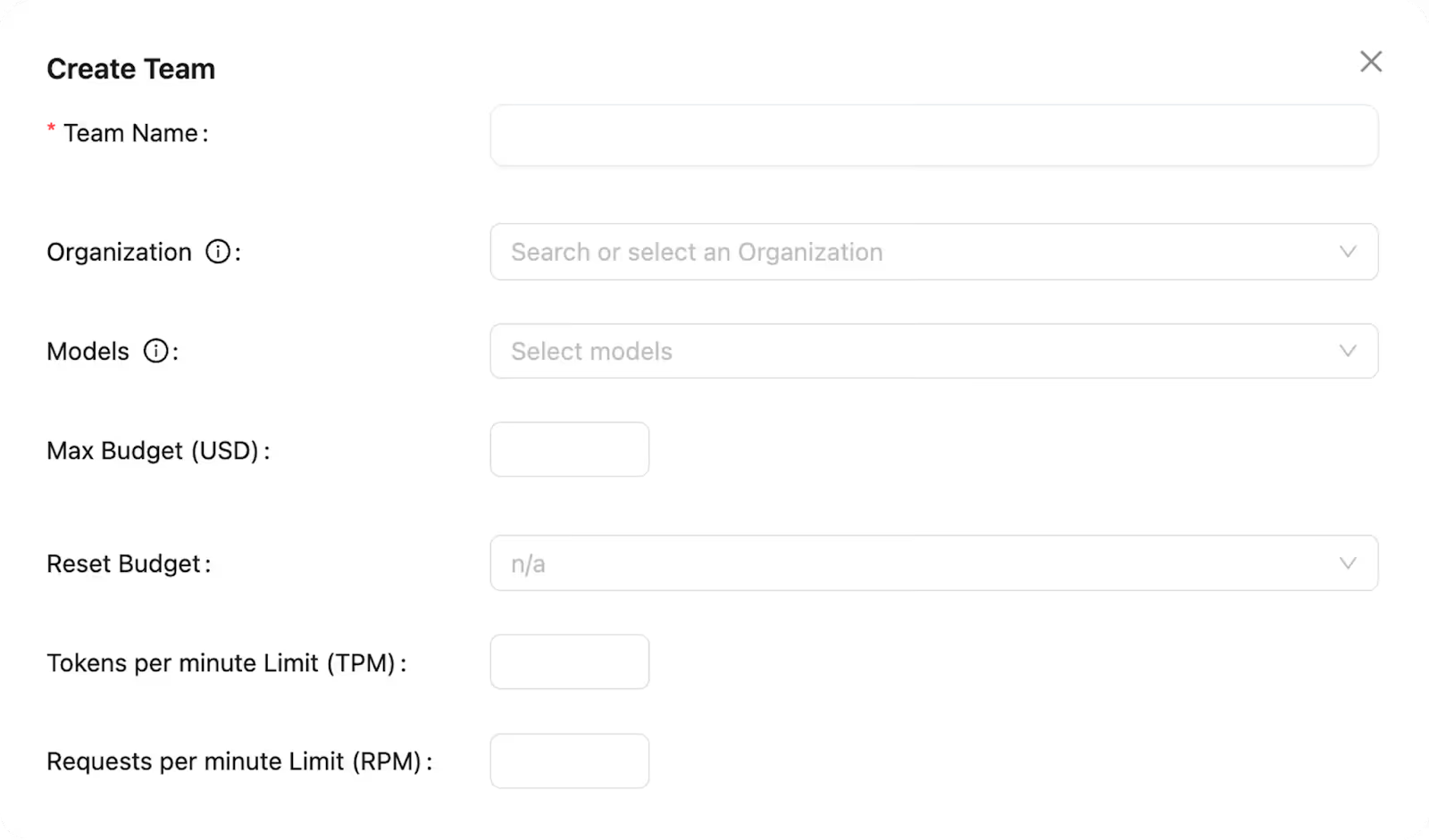

- Governance controls: Set RPM/TPM limits on per-user or per-team “virtual API keys” to prevent abuse and smooth traffic spikes. You can also enforce granular spend budgets (e.g., per user) with periodic resets, and block usage when a budget is reached
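
The budget behavior described above (a per-user limit, periodic resets, and blocking once the limit is reached) can be sketched in a few lines. This is a generic illustration, not LiteLLM's code or configuration; `SpendBudget`, `BudgetExceeded`, and the dollar amounts are hypothetical, and the clock is passed in explicitly so the example stays deterministic.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a charge would push spend past the budget limit."""


@dataclass
class SpendBudget:
    limit_usd: float
    period_seconds: float
    window_start: float = 0.0
    spent_usd: float = 0.0

    def charge(self, cost_usd: float, now: float) -> None:
        """Record a cost, resetting the window first if the period has elapsed."""
        if now - self.window_start >= self.period_seconds:
            # Assumption: a new window starts at the first request after expiry.
            self.window_start = now
            self.spent_usd = 0.0
        if self.spent_usd + cost_usd > self.limit_usd:
            raise BudgetExceeded(f"limit of ${self.limit_usd:.2f} reached")
        self.spent_usd += cost_usd


# One budget per user: $1.00 per day (86,400 seconds).
budgets = {"alice": SpendBudget(limit_usd=1.00, period_seconds=86_400)}

budgets["alice"].charge(0.60, now=0)          # accepted
try:
    budgets["alice"].charge(0.60, now=3_600)  # would total $1.20, so it is blocked
    blocked = False
except BudgetExceeded:
    blocked = True

budgets["alice"].charge(0.60, now=90_000)     # a new daily window has started
print(blocked, budgets["alice"].spent_usd)
```

Keying the `budgets` dictionary by team or project instead of user gives the other scopes; an RPM or TPM limit follows the same pattern with a request or token count in place of dollars.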

Related: The top competitors to LiteLLM in 2026

When to choose LiteLLM over Portkey

- You need tighter control over deployments. LiteLLM can be deployed as a self-hosted proxy (private cloud or on-prem), giving you more control over where it runs and how traffic is handled than Portkey

- You want more granular budget enforcement. LiteLLM can go beyond Portkey’s “budget limits” by letting you enforce spend budgets at multiple scopes (global, per team, or per user), with periodic resets, and the ability to block usage when a budget is hit

- Caching is a priority. LiteLLM offers more extensive caching options, including exact-match response caching and semantic caching via common backends (e.g., Redis or Qdrant)
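
For readers unfamiliar with exact-match response caching, here is a minimal in-memory sketch. It assumes a request is identified by its model plus its messages; `ExactMatchCache` and `fake_llm` are hypothetical names, and a real deployment would typically use a shared backend such as Redis rather than a Python dictionary. Semantic caching differs in that it matches on embedding similarity instead of an exact hash, which this sketch does not attempt.

```python
import hashlib
import json
from typing import Callable


class ExactMatchCache:
    """Cache responses keyed by a hash of the exact model and message list."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0

    @staticmethod
    def _key(model: str, messages: list[dict]) -> str:
        # sort_keys makes the serialization stable regardless of dict key order.
        canonical = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get_or_call(
        self,
        model: str,
        messages: list[dict],
        call: Callable[[str, list[dict]], str],
    ) -> str:
        key = self._key(model, messages)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        response = call(model, messages)
        self._store[key] = response
        return response


calls: list[str] = []


def fake_llm(model: str, messages: list[dict]) -> str:
    """Hypothetical stand-in for a paid provider call."""
    calls.append(model)
    return "A gateway sits between your app and LLM providers."


cache = ExactMatchCache()
msgs = [{"role": "user", "content": "What is an LLM gateway?"}]
first = cache.get_or_call("model-x", msgs, fake_llm)
second = cache.get_or_call("model-x", msgs, fake_llm)  # served from cache
print(len(calls), cache.hits)
```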

