Introducing Merge Gateway: the control plane for production AI

.avif)

When we started Merge, we were obsessed with a simple idea: developers should be able to work on their core products and focus on their unique advantages.

This led us to build Merge Unified, which turned fragmented SaaS APIs into a single, normalized API. It also led us to launch Merge Agent Handler, which replaces sprawling MCP servers with one secure layer to authenticate and operate across thousands of tools.

Today, we’ve seen a new kind of fragmentation emerge with LLMs. Companies like OpenAI, Anthropic, Google, xAI, Mistral, and dozens of others are constantly shipping new models.

For engineering teams, this fragmented infrastructure creates a familiar problem: you’re spending more time managing infrastructure than building your product.

We’re excited to announce our solution: Merge Gateway.

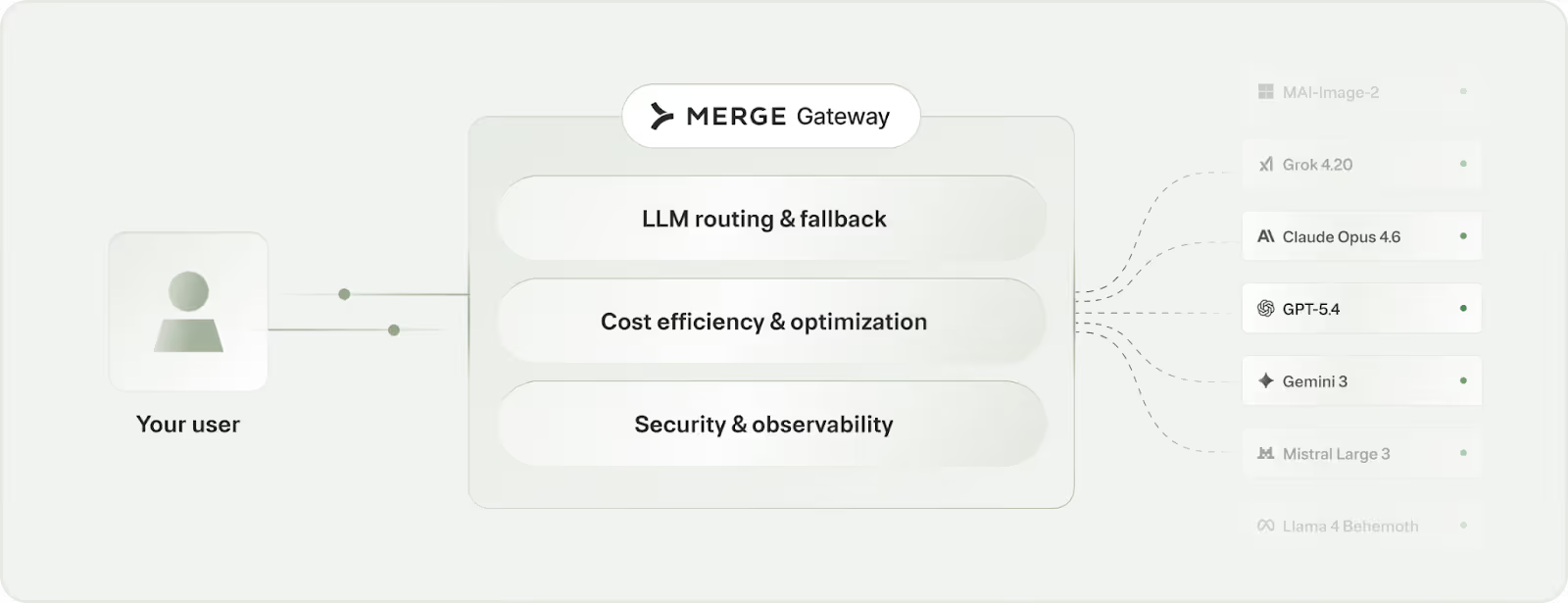

Merge Gateway is the control plane for running AI in production. It sits between your application and model providers, orchestrating how every request is routed, how costs are controlled, and how AI is used across your system.

What this looks like in practice

You can use Merge Gateway for a range of use cases, from smart model routing to cost controls to proactive security measures. Here are just a few examples:

- Route requests to the most cost-effective model:: When you receive requests that require deep research, Gateway auto-routes to a model like GPT-5.3 or Claude Opus 4.6 Sonnet; simpler requests get routed to a cheaper model, like GPT-5.3 mini or Claude Haiku 4.5. You can also configure the routing logic yourself, so you have flexibility over when premium models get used

- Budget enforcement: Say finance allocates a budget (e.g., $100/week) to a project in Gateway. Gateway then tracks real-time spend against this budget and enforces limits. Once that weekly budget is exhausted, additional requests are rejected until the budget resets (i.e., the week passes) or is increased

- Maintain zero downtime with automatic failover: If a model provider has an outage or starts erroring, Gateway automatically routes requests to another working model so your app keeps running. You can also set the logic that determines which providers and models to fail over to, in what order, and under what conditions

These aren't hypotheticals. They're the kinds of problems Gateway solves out of the box.

How Gateway works

Here’s a closer look at Gateway’s core capabilities.

Route to the right model

Access to models is only the beginning.

Gateway handles intelligent routing and automatic fallback.

Every request is routed to the optimal model based on latency, quality, and cost. If a provider degrades or goes down, traffic automatically reroutes to a healthy model. No manual intervention. No downtime.

Imagine a request is initially routed to OpenAI’s GPT-5.2.

If that model is down or hits a rate limit, Gateway falls back to your next preferred provider (like Claude Sonnet 4.6) based on the routing strategy you’ve defined.

Or let Gateway handle it entirely. Intelligent routing selects the optimal model for each request based on latency, cost, and performance in real time.

We also provide a simulator that helps you quickly spot differences in model performance before you ship to production.

More specifically, it lets you run your actual requests against multiple models side by side before anything reaches production. You can then compare outputs, spot regressions, and validate quality so model migrations and fallback configurations are based on evidence, not gut feel.

Slash LLM burn. Accelerate performance

LLM spend scales directly with usage, often without guardrails or visibility. And most teams don't realize how much they're overspending until the bill arrives.

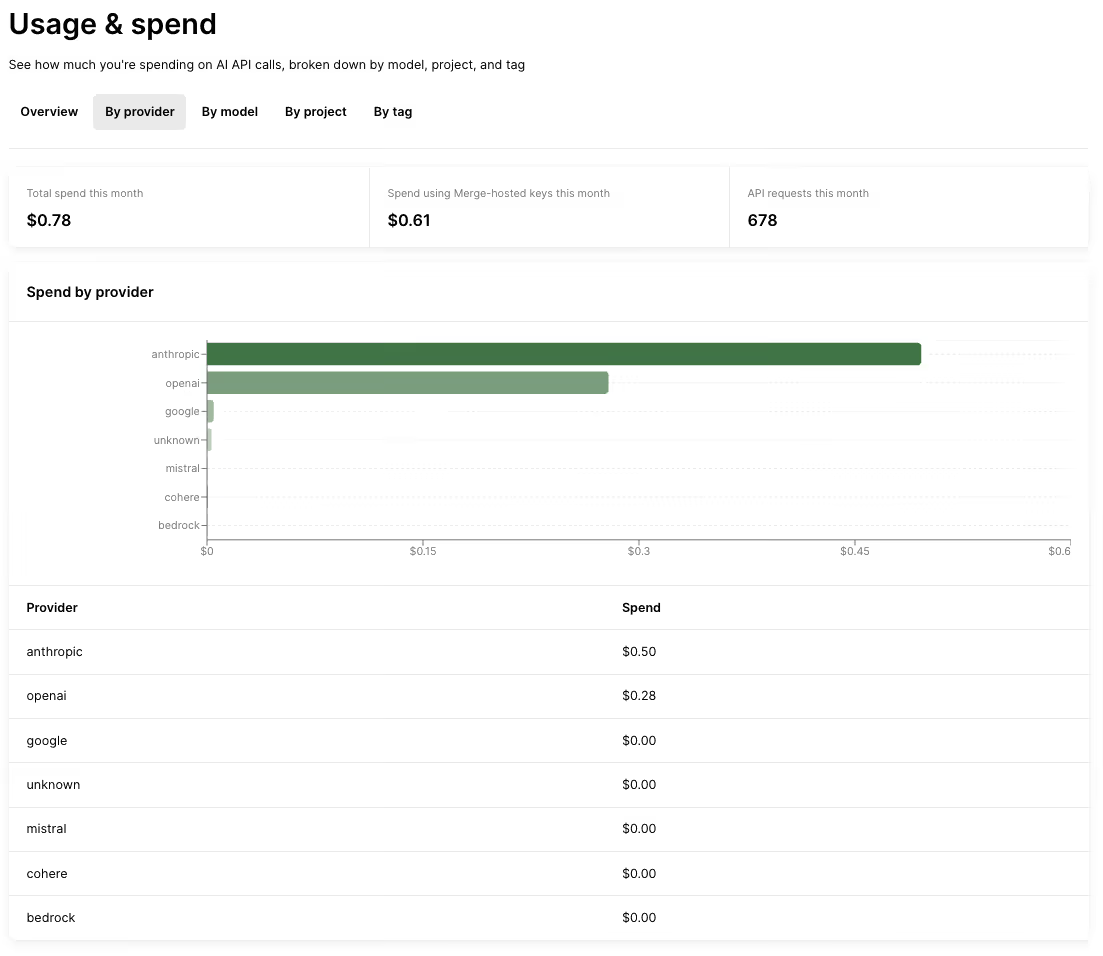

Merge Gateway lets you manage this spend effectively at the project level, which lets you cap spend on a specific feature, experiment, or workload.

But the real savings come from the optimization engine running in the background:

- Semantic caching reuses responses for semantically equivalent requests, cutting redundant token usage (note: this feature will launch in the next few weeks)

- Context compression reduces the size of inputs sent to models without degrading output quality. You can run context compression on every request, or only enable it when traffic is routed to cheaper models, typically via fallback

You can also track where every dollar goes by model, provider, project, and tag to quickly spot waste and focus on protecting your margins.

Since small inefficiencies compound into real budget risk as you ship AI to production, this level of visibility helps you catch problems early, enforce accountability across teams, and make smarter tradeoffs before costs start eating into margins.

It also gives you a clear picture of how AI is actually being used across teams and products, so you can spot adoption trends, benchmark performance, and invest in the use cases that deliver real impact.

Get full visibility into every LLM request

As AI moves into production, it can introduce operational risks that have a massive impact on your business.

This is the part most AI infrastructure tools skip. Routing and cost optimization matter, but they're not enough when AI is running in production at scale.

When sensitive data leaks, costs surge, and/or systems behave in unexplainable ways, there's no centralized record of what happened and why. These are governance failures, not infrastructure failures.

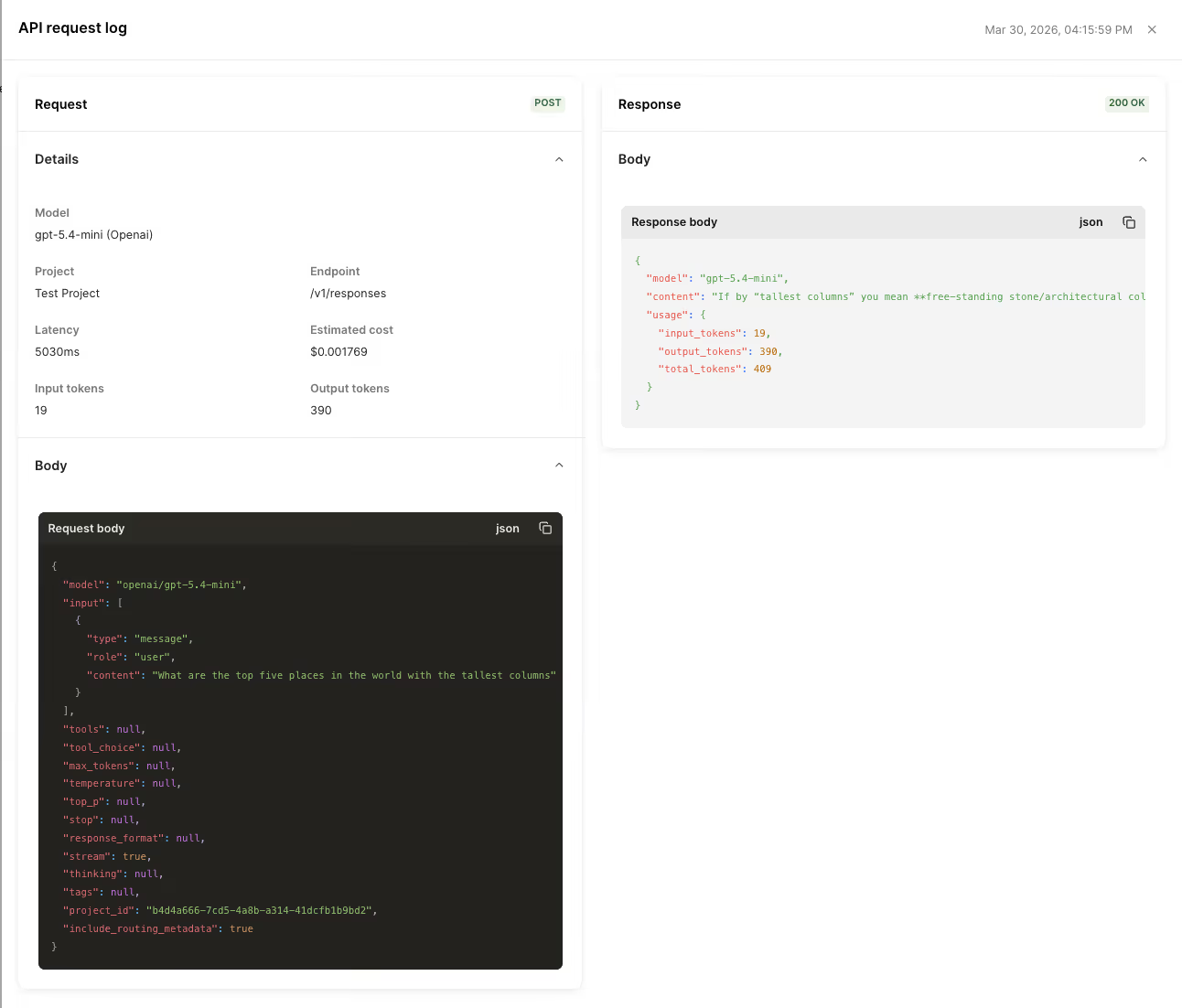

Merge Gateway solves this by centralizing governance at the infrastructure layer. Every request is logged with its routing decision, cost, latency, and policy outcome, giving teams full visibility into how AI behaves in production.

For engineering teams, this means complete observability from day one, not something you retrofit after an incident. And for security and compliance teams, it means a consistent, auditable record of every AI action across the organization.

We'll soon be releasing policy enforcement and data controls to strengthen this further.

How Gateway fits into the Merge platform

Thousands of companies already build AI products on Merge. Gateway is just the next layer.

Merge Unified gives AI products a single, normalized way to access and write data across hundreds of customer’ systems. Merge Agent Handler provides safe, agent-ready actions with scoped permissions and cross-system execution. And Gateway adds the control plane for LLM optimization that makes API and tool use reliable and efficient in production.

You can try out Merge Gateway for free or learn more in our docs. And if you’re already a Merge customer, you can reach out to your CSM for access.

.avif)

.png)

.png)